Source: Getty

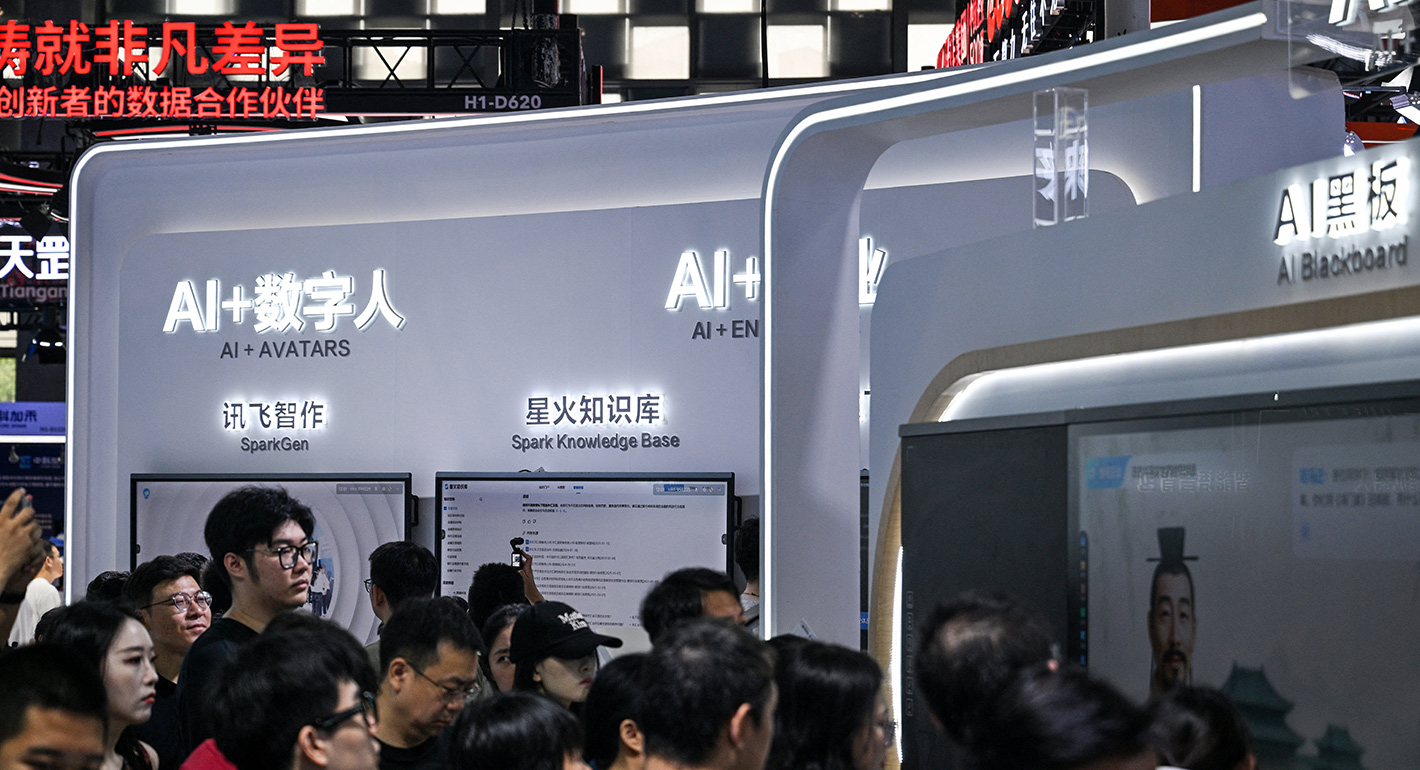

Censorship in China spans the public and private domains and is now enabled by powerful AI systems.

This essay is part of a series from Carnegie’s digital democracy network, a diverse group of thinkers and activists engaged in work on technology and politics. The series is produced by Carnegie’s Democracy, Conflict, and Governance Program. The full set of essays is scheduled for publication in summer 2026.

In September 2025, China’s leader, Xi Jinping, presided over a massive military parade featuring tanks, fighter jets, and missile launchers alongside “goose-stepping troops,” in the words of reporter David Pierson.1 Beijing’s aim was not only to demonstrate strength but also to showcase its alliances. Seated beside Xi were Russian President Vladimir Putin, North Korean leader Kim Jong Un, and senior Iranian officials. The display of solidarity among these governments was widely noted, with some observers describing them as the “Axis of Upheaval.”2 The phrase evoked memories of the Axis alliance in the Second World War and underscored the extent to which authoritarian governments are increasingly comfortable signaling their alignment on the world stage.

What captured international attention, beyond the weaponry and the guest list, was an unscripted exchange. During a live broadcast, as Xi and Putin walked together, microphones picked up a conversation about organ transplants and immortality.3 Putin suggested that organs from younger people could prolong vitality, while Xi referred to claims that human lifespans might stretch to 150 years.

Had this been a conversation between random passersby, it might have been dismissed as fantasy talk. But given the positions of the speakers, and longstanding allegations of forced organ harvesting in the PRC, the exchange drew widespread scrutiny.4 The video circulated quickly online, provoking debate before Chinese authorities asked media platforms to retract it.5

Despite Beijing’s request, the clip spread rapidly across international social media platforms, which were beyond its reach. Inside China, officials acted swiftly. Discussions on Chinese social platforms about “150 years old” and related topics initially spiked but were then suppressed.6

A user on X shared his experience on WeChat.7 He sent a message to a colleague joking about Xi’s reference to “immortality” (長生不老). The message appeared on the desktop version of the app but disappeared from his phone. His colleague did not receive the message at all. Another user tested the same phrase and confirmed that the message had been automatically censored.

Chinese authorities’ crackdown on this speech is a vivid reminder of how censorship in China spans the public and private domains. Such robust digital authoritarianism is now enabled by powerful AI systems.

In the early days of China’s internet, content moderation was carried out primarily by human reviewers employed by government agencies and technology firms. They monitored posts, flagged sensitive material, and deleted content deemed politically problematic.

As the number of internet users grew (reaching 1.05 billion by 2023),8 the sheer volume of material became impossible to manage through manual review alone. To meet this challenge, major platforms such as WeChat, Weibo, and Douyin adopted AI-driven censorship tools.9

These systems employ natural language processing, machine learning, sentiment analysis, and image-recognition technologies to scan vast amounts of text, photos, and videos in real time. They can automatically remove or block sensitive content, shift censorship from reactive deletion to proactive suppression, and reduce the time needed to enforce government directives.

Despite their capabilities, AI censorship systems face inherent limitations. Scholars describe these shortcomings as the “authoritarian data problem.”10 The more tightly a state represses speech, the less relevant data it generates for training its algorithms, which leads to biased models and an inability to detect dissenting content.

Citizens, aware of the risks, adapt strategically: they self-censor and rely on coded language. This behavior reduces the amount of politically sensitive material available for AI training. As a result, the algorithm’s ability to distinguish between acceptable and censurable content is weakened. The challenge grows as users devise new homophones, euphemisms, puns, and cultural references designed to evade detection.

The dynamic mirrors biases seen in other AI applications, such as facial recognition, where unbalanced datasets lead to systematic inaccuracies. In censorship, repression itself produces the imbalance: the more dissent is suppressed and people refrain from expressing themselves, the fewer examples exist for machines to learn from.
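The imbalance described above can be illustrated with a deliberately simple toy. The sketch below is not drawn from any real system: the "classifier" only memorizes words it has seen in flagged examples, and every phrase, function name, and number is a hypothetical placeholder. It shows only the general point that fewer labeled examples leave more unseen phrasing undetected.

```python
# Toy illustration only: all phrases, names, and numbers are hypothetical.

def learn_flag_terms(labeled_posts):
    """Collect tokens seen in flagged posts but never in unflagged ones."""
    flagged, clean = set(), set()
    for text, is_sensitive in labeled_posts:
        (flagged if is_sensitive else clean).update(text.split())
    return flagged - clean


def recall(flag_terms, sensitive_posts):
    """Share of truly sensitive posts containing at least one learned term."""
    caught = sum(1 for text in sensitive_posts if flag_terms & set(text.split()))
    return caught / len(sensitive_posts)


benign = [("weather is nice today", False), ("lunch was great today", False)]
coded = [
    ("alpha gathering tonight", True),
    ("bravo gathering tomorrow", True),
    ("charlie march at noon", True),
    ("delta strike next week", True),
]
# Held-out posts reuse the coded terms in new sentences.
held_out = ["alpha rally soon", "bravo rally soon",
            "charlie rally soon", "delta rally soon"]

# Less restricted setting: all four coded examples are available for training.
open_terms = learn_flag_terms(benign + coded)
# Repressed setting: self-censorship leaves only one example to learn from.
repressed_terms = learn_flag_terms(benign + coded[:1])

recall_open = recall(open_terms, held_out)
recall_repressed = recall(repressed_terms, held_out)
print(f"recall with ample examples: {recall_open:.2f}")
print(f"recall with scarce examples: {recall_repressed:.2f}")
```

In this toy, the model trained on a single example catches only the one held-out post that reuses a word it has already seen. Real systems are far more sophisticated, but the dependence on available examples is the same in kind.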

To offset this shortfall, authoritarian governments might instead turn to international data. Posts from platforms like X or Facebook, generated in less restricted environments, provide a rich source of politically sensitive discourse. Incorporating such data might improve censorship algorithms by exposing them to patterns absent in domestic platforms.

Yet this approach also has limits. The language and style of political discussion inside China often diverge sharply from what appears on Western platforms. Coded speech used on Weibo or WeChat may not resemble posts on X, making the imported data only partially useful. Academic studies simulating this technique have also found that patching in outside data does not offset the constraint imposed by politically biased training data.11

Outsourcing the generation of politically sensitive data to foreign workers also encounters difficulties. The coded language and slang employed by Chinese netizens often require deep cultural familiarity to decode. Without this knowledge, identifying subversive content becomes difficult.

For these reasons, AI has not eliminated the need for human censors.12 Instead, the two systems function together: AI tools conduct initial scans, filtering or flagging potentially sensitive material, while human reviewers make final judgments, particularly for ambiguous cases.

The latest report by InterSecLab analyzing leaked materials reveals how China exports its internet surveillance and censorship technologies to other countries.13 A key finding is the integration of AI at multiple layers of the censorship system. AI systems process vast volumes of network data, using pattern recognition, anomaly detection, and behavioral scoring to flag certain users or traffic patterns as “suspicious.” Human analysts then review these alerts, adjust the system’s sensitivity, and determine appropriate follow-up actions. This disclosure demonstrates both the scale of AI-driven automation in modern censorship regimes and the continued reliance on human oversight to calibrate and act upon AI-generated findings.

What remains unclear is whether the rise of AI has significantly reduced the overall costs of “stability maintenance” (維穩), otherwise known as public security expenditure. This financial burden has long been a focus for Beijing, and it has surpassed military spending in some years since 2009. A study by Taiwan’s Institute for National Defence and Security Research (INDSR) estimated China’s undisclosed “stability maintenance costs” for 2019 to be 1,331.7 billion yuan ($198.5 billion). This figure exceeds the country’s official military expenditure of 1,189.9 billion yuan ($177.3 billion).14 If expenses remain high, strains will grow as China’s economy slows. For the foreseeable future, human oversight will remain an integral part of the system, ensuring that the financial weight persists.
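For readers who want to see the size of the gap, the short calculation below uses only the 2019 figures quoted above. The variable names are illustrative, and the implied exchange rates are derived from the quoted numbers rather than taken from any official source.

```python
# Figures as quoted in the text (2019, in billions); names are illustrative.
stability_yuan, stability_usd = 1331.7, 198.5
military_yuan, military_usd = 1189.9, 177.3

gap_yuan = stability_yuan - military_yuan      # absolute difference
ratio = stability_yuan / military_yuan         # relative size
rate_stability = stability_yuan / stability_usd  # implied yuan per dollar
rate_military = military_yuan / military_usd     # implied yuan per dollar

print(f"gap: {gap_yuan:.1f} billion yuan")
print(f"stability spending is {100 * (ratio - 1):.1f}% above military spending")
print(f"implied rates: {rate_stability:.2f} and {rate_military:.2f} yuan per dollar")
```

On these numbers, the estimated stability-maintenance bill exceeds official military spending by roughly 142 billion yuan, or about 12 percent, and the two dollar conversions imply nearly the same exchange rate of about 6.7 yuan per dollar.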

For Chinese dissidents, these discoveries offer a sliver of hope: the ingenuity of human expression can still outpace the seemingly superior machine. Creative acts of protest, such as using blank sheets of paper to protest strict COVID-19 measures, show that the government can be slow to respond to unprecedented expressions of dissent.15

AI cannot censor ideas it has never read, and this gap continues to encourage citizens to find new ways of speaking. For Chinese citizens operating on Western platforms, one strategy is to communicate as plainly as possible rather than imitating the coded homophones and wordplay common inside China. Doing so limits the regime’s ability to harvest coded-language datasets of dissenting speech to train its censorship AI systems, thereby weakening the effectiveness of its censorship algorithms over time.

If economic pressures in China intensify, sustaining the apparatus could become more difficult. During periods of political unrest, when dissent rises and censorship demands increase, the risk of system overload also grows. Should AI systems underperform in the face of new, creative protest slogans, and should financial constraints leave too few human moderators to compensate, opportunities may emerge for sensitive information to circulate more widely and for social movements to grow.

Nathan Law

Nathan Law is a Hong Kong democratic activist and former legislator.

Carnegie does not take institutional positions on public policy issues; the views represented herein are those of the author(s) and do not necessarily reflect the views of Carnegie, its staff, or its trustees.
