IPAIS would offer opportunities for collaboration to inform policymakers and the public on issues of AI safety.

There is a pressing need for fresh thinking on AI safety, security, and governance in order to reduce the risks of frontier AI technology and expand its benefits for the world. This proposal offers a list of key design principles for a new organization—an International Panel on AI Safety (IPAIS)—inspired by the Intergovernmental Panel on Climate Change (IPCC). This body would focus on assessing and validating the scientific evidence supporting a deep technical understanding of current AI capabilities, their improvement trajectories, and the relevant safety and security risks. With grounding in science, independence from political interference, and an international membership, IPAIS would present an opportunity for robust international collaboration to inform policymakers and the public. If successful, this institution could pave the way for further cooperation.

A new AI safety organization should satisfy at least the following key design principles:

IPAIS would fill the need for an objective, in-depth, expert-led understanding of where AI capabilities stand today and where they are headed. It would regularly and independently evaluate the state of AI, its risks and potential impacts, and estimated timelines for technological milestones. It would keep tabs on both technical and policy solutions to alleviate risks and enhance outcomes. Initially, IPAIS would be a purely fact-finding exercise, providing comprehensive clarity on the state of AI, its trajectory, and its uses. It would focus, as much as possible, on objective, commonly agreed metrics and indicators, and where they are absent, it would help produce them.

IPAIS would complement a larger ecosystem of AI cooperation activity, including a network of safety institutes that perform research directly, the Frontier Model Forum, a host of voluntary measures, and various national-level initiatives. It would augment these activities by disseminating validated research that can build consensus about the most important risks to mitigate so that humanity can realize the benefits of AI, by identifying key questions that merit further research, and by building a community of scientists and technical experts who can collaborate and learn from one another.

Further discussion can help determine how to secure sustainable, long-term funding that provides financial flexibility; achieve broad international buy-in; ensure that the governance structure serves the organization’s purposes; and avoid politicization and controversy by developing measures to buttress the organization’s credibility.

Mustafa Suleyman

Mariano-Florentino (Tino) Cuéllar

President, Carnegie Endowment for International Peace

Mariano-Florentino (Tino) Cuéllar is the tenth president of the Carnegie Endowment for International Peace. A former justice of the Supreme Court of California, he has served three U.S. presidential administrations at the White House and in federal agencies, and was the Stanley Morrison Professor at Stanford University, where he held appointments in law, political science, and international affairs and led the university’s Freeman Spogli Institute for International Studies.

Ian Bremmer

Jason Matheny

Philip Zelikow

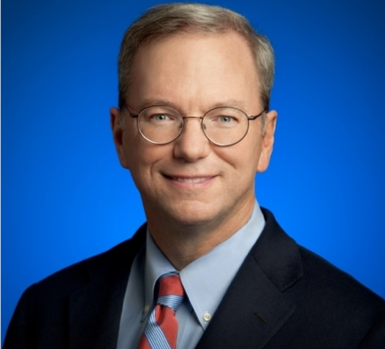

Eric Schmidt

Eric Schmidt is the chairman of the National Security Commission on Artificial Intelligence. Previously, he served as the CEO of Google from 2001 to 2011.

Dario Amodei

Carnegie does not take institutional positions on public policy issues; the views represented herein are those of the author(s) and do not necessarily reflect the views of Carnegie, its staff, or its trustees.
