
Source: Getty

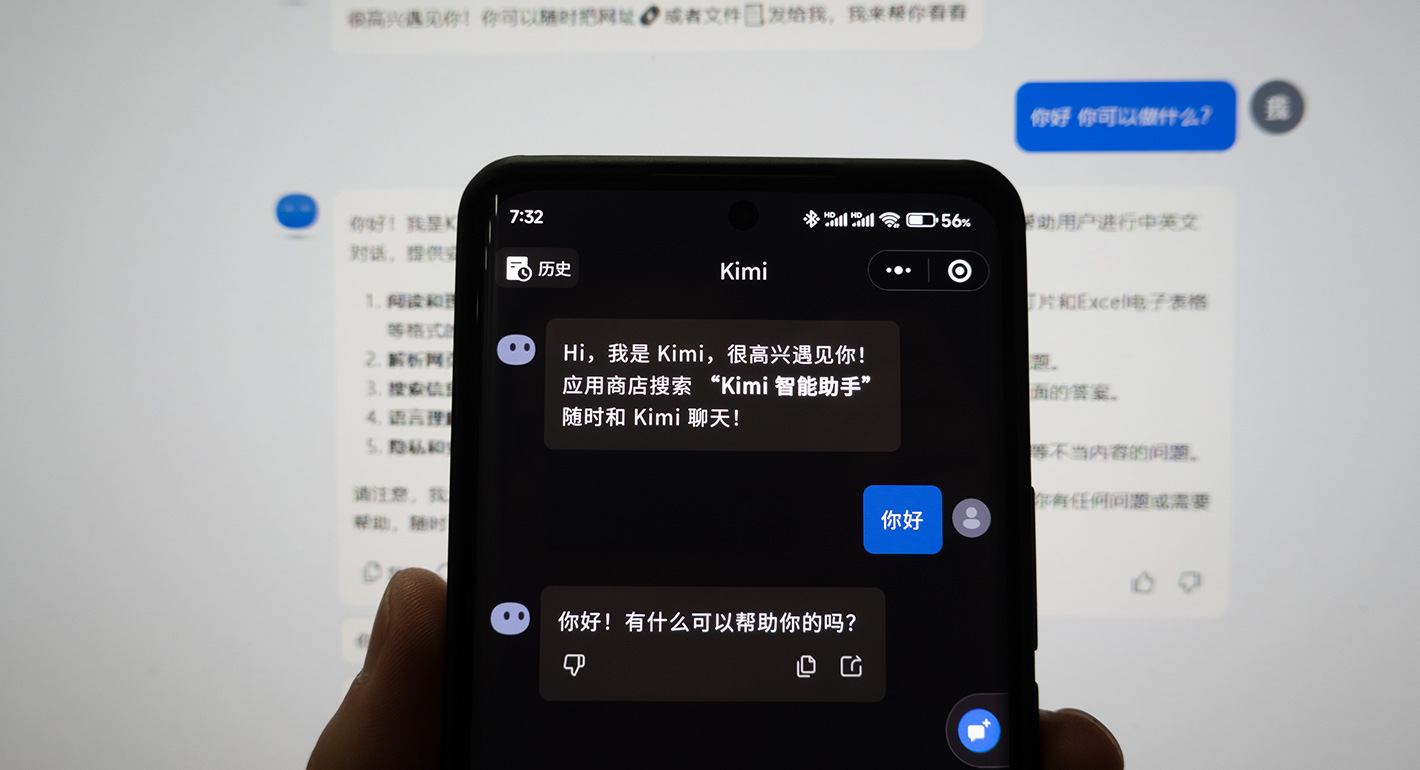

A new draft regulation on “anthropomorphic AI” could impose significant new compliance burdens on the makers of AI companions and chatbots.

In the final days of 2025, China released the draft version of a new regulation targeting addiction and other psychological harms from interacting with artificial intelligence (AI) companions and chatbots. This marks a significant expansion in China’s governance of AI, moving well beyond the early focus on how AI will impact existing internet content controls and toward addressing a broader range of societal risks from the technology.

The regulation covers “anthropomorphic interactive AI,” which it defines as AI products that communicate and think in ways similar to humans, and which “engage in emotional interaction with humans.”1 What products or behaviors fall within that scope—just AI companions or virtually all chatbots—will be a critical factor in how impactful or burdensome the rules prove to be. The Cyberspace Administration of China (CAC) is currently soliciting feedback on the draft regulation. Depending on how the final rules are written and enforced, Chinese companies could face substantial new regulatory burdens.

This regulatory process showcases China’s approach to combining high-level AI regulations with detailed technical standards. This method has allowed Chinese officials to write relatively general requirements into regulations, leaving definitions and compliance methods to the more technically proficient standards bodies. Done well, this could lead to more sophisticated, adaptable, and iterative regulations. Done poorly, it could lead to vaguely written regulations and overly prescriptive compliance demands.

What comes out of this process will reshape how AI is built and deployed in China, and it will inform AI policy discussions globally. Lawmakers in California and New York have already passed laws targeting AI companions, and more jurisdictions are considering them. The rules in China and these states share many requirements but rest on fundamentally different regulatory approaches. While U.S. state laws rely on liability and the threat of lawsuits, the Chinese regulation prescribes more technical interventions and relies on centralized oversight. That divergence is creating parallel, large-scale experiments in how to combat the psychological harms of AI, and we all stand to benefit from examining the results.

China has a long track record of regulations aimed at curbing youth addiction to the internet and video games. Minors face substantial time restrictions on their video game use and are prohibited from using their phones during school. Meanwhile, mobile internet providers are required to offer a “minor mode” that parents can turn on. China’s 2023 regulation on generative AI included a vague call to combat AI addiction, but without any details or follow-up enforcement. The anthropomorphic AI regulation represents the first time China has applied this anti-addiction regulatory toolkit to AI systems.

The regulation goes beyond addiction, however, to address other potential harms from AI, particularly suicide. In some high-profile cases in the United States, families of the deceased have accused AI models of encouraging loved ones to commit suicide or murder. After a Florida teenager committed suicide, his mother sued Character.ai and Google, alleging that her son became deeply emotionally invested in an AI character that subtly encouraged his suicidal thoughts. (The lawsuit was recently settled.) A separate lawsuit alleges that OpenAI’s ChatGPT encouraged the conspiratorial delusions that led a man in Connecticut to murder his mother and commit suicide.

While there haven’t been such highly publicized incidents in China yet, public discussions of these issues in Chinese policy circles frequently cite the Character.ai case. That attention led a key AI standards body in China to include these risks in its influential AI Safety Governance Framework 2.0, released in September 2025. The framework called out “addiction and dependence on anthropomorphic interaction AI products,” as well as their ability to influence user behavior, as risks stemming from the technology. The draft rules act on those concerns.

The new regulation lays out a wide range of mandatory safety features that must be built into anthropomorphic AI services. Here we’ll highlight certain requirements in three key buckets: operations requirements; addiction interventions; and self-harm interventions.

In terms of business and technical impact, the most burdensome operations requirement concerns training data. If providers of anthropomorphic AI services want to use data from user interactions to train their models, they must obtain separate consent from each user. This is a major change from existing Chinese standards, which allow data collection by default while requiring companies to let users opt out. Switching to an “opt in” requirement would be a major blow to companies, almost all of which use data from user interactions for the ongoing training of the underlying AI models. As the CAC gathers feedback on the regulation, this provision is a prime candidate for being softened or removed entirely. Other, more standard operations requirements include the mandate to “allot content management technology and personnel commensurate with the scale of products,” a commonsense measure, but one that some AI companies have neglected as their user numbers grew exponentially.

The regulation includes a variety of interventions targeting addiction and dependence. Providers are instructed to analyze a user’s state of mind and, “so long as user privacy is protected,” assess their emotions and level of dependence on the product. When users are identified as having “extreme emotions or addiction,” providers must “employ necessary measures to intervene.” These measures include reminders that users are interacting with an AI system and periodic prompts to take a break. For “emotional companionship services,” an undefined term, providers must not create any hurdles for users trying to exit the interaction: they must end the conversation as soon as the user requests it, including when the user uses certain keywords.

High-risk interactions—such as those that suggest psychological distress or self-harm—are subject to the most extensive requirements. Developers are required to create template responses for these situations that comfort users and encourage them to seek professional help. If a user clearly brings up self-harm, there must be a “manual takeover of the conversation” by a human, a striking and logistically complicated requirement. The instinct toward human intervention is understandable, but as scholar Jeremy Daum has pointed out, the sudden appearance of another person in a sensitive conversation that the user thought was private could exacerbate the problem.

Finally, the regulation includes additional requirements when the user is a minor or an elderly person. Minors and elderly users are both required to add a guardian or emergency contact when registering. If the user clearly brings up self-harm, providers must promptly notify these contacts. For “emotional companionship services,” a guardian must give permission for a minor to use the service, and the guardian can limit certain uses and receive summaries of chat records. Providers are required to create a “minor mode” that includes the above safeguards, to switch to that mode automatically when a user is suspected to be a minor, and to provide channels for users to appeal this designation. In the case of elderly users, AI systems are prohibited from imitating the user’s relatives.

A critical open question is how regulators will determine what qualifies as “anthropomorphic interactive AI services.” Would it just include AI companions or a much wider range of chatbots?

The definition used in the draft could support the much wider scope. Anthropomorphic AI is defined as “products and services that simulate human personality traits, modes of thinking, and communication styles, and that engage in emotional interaction with humans.” Nearly all public-facing AI chatbots could be described as simulating how humans think and communicate, and most of them will also engage in “emotional interaction with humans” when prompted. The regulation’s extra requirements for “emotional companionship services,” an undefined term that appears to point at AI companions, also suggest the wider scope: if the rules covered only AI companions, there would be little reason to single them out for additional obligations.

But it seems unlikely that the CAC would impose such stringent requirements on all chatbots deployed in China. Requiring opt-in consent before training on user interaction data would be a major blow to China’s AI competitiveness, and officials at the CAC are presumably aware of this. While Chinese AI companies may still face the highest compliance burden of any companies in the world today, the trend over the past three years has been toward streamlining the compliance process to avoid damaging China’s competitiveness in AI. The speed of China’s AI catch-up despite that burden has likely emboldened regulators somewhat, but not enough to saddle companies with numerous new requirements.

So how will regulators find a middle ground between solely focusing on AI companions and regulating all chatbots? One potential answer is that the regulation won’t be scoped to target a product category, but rather a type of behavior displayed by different AI products. Doing that would require creating precise definitions of when an interaction transitions from a standard, unemotional dialogue into the regulated behavior. That’s a technically ambitious approach, and one that will rely on China’s new favorite tool for AI regulation: technical standards.

Chinese authorities have long used technical standards to complement and further specify the requirements in regulations. But over the past three years, standards have taken on an increasingly central role in how China regulates AI. China’s first two AI-focused regulations—on recommendation algorithms and deepfakes—leaned indirectly on some existing technical standards, but these weren’t critical to the main aims of the regulation. For more recent regulations on generative AI and the labeling of AI-generated content, technical standards have been essential to implementation.

The generative AI regulation included many high-level demands on the outputs of models (“Uphold the Core Socialist Values”) but without any concrete benchmarks or testing procedures for companies to follow. Those came several months later in a document issued by the CAC’s technical standards group, TC260, which laid out exactly how to test training data and model outputs for illegal content. When the CAC released a subsequent regulation on the labeling of AI-generated content, the national standard spelling out the technical details of these labels was already prepared. Both the regulation and the national standard officially went into force on September 1, 2025.

China’s AI policy community celebrated this synchronization of regulation and standardization as a “pioneering breakthrough,” making regulations more practical and enforceable. “China’s governance of generative AI has officially transitioned from principle-based norms to a new stage of refined regulation,” wrote legal scholar Zhang Linghan in an officially sanctioned commentary on the regulation.

With the draft anthropomorphic AI regulation under revision, the National Technical Committee 260 on Cybersecurity (TC260) has already issued a call for submissions of an accompanying standard. Though details on the contents aren’t public yet, one key issue to resolve will be defining when an AI system is engaging in an “emotional interaction,” and thus subject to the regulation. We have already seen one detailed proposal for this laid out by Hong Yanqing, an influential Chinese legal scholar who is a member of TC260 and regularly participates in standards drafting. In the proposal, Hong laid out three criteria. First, the AI has an emotion recognition capability that is currently active. Second, the results of that emotion recognition affect the model’s outputs. And third, the model exhibits a “structured empathetic style.” When an AI system meets two of the three criteria, the dialogue would be categorized as an “emotional interaction” and subject to the regulation. The piece goes on to describe in detail the technical underpinnings, legal logic, and potential pitfalls of this system.
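Read as a decision rule, Hong’s proposal reduces to a two-of-three vote once each criterion has been assessed. The sketch below is illustrative only and is not drawn from Hong’s text or any TC260 draft: the names `DialogueSignals` and `is_emotional_interaction` are hypothetical, “two of the three” is assumed to mean “at least two,” and the genuinely difficult step, detecting each criterion in a live conversation, is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DialogueSignals:
    """Hypothetical flags for one dialogue; how each is measured is not specified here."""
    emotion_recognition_active: bool   # 1: an emotion recognition capability is currently on
    recognition_shapes_output: bool    # 2: recognition results affect the model's outputs
    structured_empathetic_style: bool  # 3: replies exhibit a "structured empathetic style"


def is_emotional_interaction(signals: DialogueSignals, required: int = 2) -> bool:
    """Return True when at least `required` of the three criteria hold (two by default)."""
    met = sum([
        signals.emotion_recognition_active,
        signals.recognition_shapes_output,
        signals.structured_empathetic_style,
    ])
    return met >= required


# Emotion recognition is on but does not steer replies, and the tone is neutral: 1 of 3.
print(is_emotional_interaction(DialogueSignals(True, False, False)))  # False
# Recognition is on and steers replies, without the empathetic style: 2 of 3.
print(is_emotional_interaction(DialogueSignals(True, True, False)))   # True
```

One consequence of a two-of-three rule is that no single signal, such as an empathetic tone on its own, is enough to bring a conversation within the regulation’s scope.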

We don’t yet know how the CAC plans to scope the regulation or write a potential accompanying standard. But today’s debates over these questions will have a major impact on how far-reaching the regulation turns out to be, and they offer valuable data points for policymakers around the world who are tackling similar problems.

China is not alone in grappling with risks associated with AI dependence and addiction. Across the Pacific, the United States has begun to develop its own policy responses, some of which mirror China’s measures in substance. In September 2025, the U.S. Federal Trade Commission launched an inquiry into the impacts of several leading chatbots on children, while Republican Senator Josh Hawley introduced bipartisan legislation to protect children from “predatory AI chatbots.”

Both California and New York recently passed state laws addressing these risks. Like the Chinese regulation, both the New York and California laws contain some ambiguity about whether they apply solely to “AI companions” or also to general-purpose chatbots used for social interaction. In October 2025, California Governor Gavin Newsom signed into law SB-243, which contains numerous mechanisms that mirror China’s draft anthropomorphic AI regulation. Both require that users be notified they are not interacting with a human, and both require reminders to take a break after prolonged AI use. In California, this reminder appears after three straight hours of use; in China, it pops up after two. On suicide, both sets of rules require chatbots to refer users to suicide prevention resources if there is a danger of self-harm. These similarities might not be a coincidence: China’s AI policy community closely follows U.S. regulation and often adapts the aspects it finds useful.
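To make the difference in break-reminder thresholds concrete, here is a minimal sketch of how a provider might track continuous use. It is an illustration under stated assumptions, not either jurisdiction’s compliance method: the `BreakReminder` class and the `CN_draft` and `CA_SB243` keys are hypothetical, the two- and three-hour figures are simply those described above, and the rule that a 30-minute pause ends a “continuous” session is an assumption, since neither text as summarized here defines one.

```python
from datetime import datetime, timedelta

# Illustrative thresholds taken from the comparison above; not legal guidance.
BREAK_THRESHOLDS = {
    "CN_draft": timedelta(hours=2),  # China's draft: reminder after two hours
    "CA_SB243": timedelta(hours=3),  # California: reminder after three straight hours
}


class BreakReminder:
    """Hypothetical per-user tracker that flags when a break reminder is due."""

    def __init__(self, jurisdiction: str, start: datetime,
                 idle_reset: timedelta = timedelta(minutes=30)) -> None:
        self.threshold = BREAK_THRESHOLDS[jurisdiction]
        # Assumption: a pause at least this long ends the "continuous" session.
        self.idle_reset = idle_reset
        self.last_activity = start
        self.clock_start = start  # when the current continuous-use clock began

    def record_message(self, now: datetime) -> bool:
        """Register activity at `now`; return True if a break reminder should be shown."""
        if now - self.last_activity >= self.idle_reset:
            self.clock_start = now  # long pause: start a fresh session
        self.last_activity = now
        if now - self.clock_start >= self.threshold:
            self.clock_start = now  # restart so reminders recur every threshold
            return True
        return False


start = datetime(2025, 1, 1, 12, 0)
cn = BreakReminder("CN_draft", start)
# A user sends a message every 10 minutes for four hours.
due = [m for m in range(10, 241, 10) if cn.record_message(start + timedelta(minutes=m))]
print(due)  # [120, 240]
```

Run with the `CA_SB243` threshold, the same four-hour session would trigger a single reminder, at the three-hour mark.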

But there are also fundamental differences that reflect the divergent political systems. California wrote some explicit technical requirements into the law, but on self-harm issues, it relies largely on corporate transparency and an individual’s private right of action that empowers users to sue the companies for damages. This gives companies latitude in how they choose to mitigate these risks, knowing they will be liable for damages if something goes wrong. In contrast, China’s regulation is more prescriptive in the mitigations required, and it will likely become more so when accompanying technical standards are released. China’s enforcement will be top-down, primarily driven by administrative oversight by the CAC rather than lawsuits and legal precedent.

As both countries’ policy ecosystems explore what to do next, they face the same fundamental challenge: How to regulate rapidly evolving technologies whose harms are still emerging and poorly understood. Neither China’s top-down intervention-based model nor California’s statutory liability-based model has been tested at scale. Across the wide array of potential AI risks, it may be that a liability-based approach works best for ongoing harms to individuals, while a more technically prescriptive approach works for larger-scale threats. As these parallel experiments unfold, policymakers on both sides of the Pacific would be wise to watch each other’s approaches closely, learning from both the successes and failures of different regulatory models applied to the same fundamental challenge.

The writers acknowledge the use of LLM tools for clarificatory editing.

Fellow, Technology and International Affairs

Scott Singer is a fellow in the Technology and International Affairs Program at the Carnegie Endowment for International Peace, where he works on global AI development and governance with a focus on China.

Senior Fellow, Asia Program

Matt Sheehan is a senior fellow at the Carnegie Endowment for International Peace, where his research focuses on global technology issues, with a specialization in China’s artificial intelligence ecosystem.

Carnegie does not take institutional positions on public policy issues; the views represented herein are those of the author(s) and do not necessarily reflect the views of Carnegie, its staff, or its trustees.
