
John Pendleton, Mackenzie Schuessler

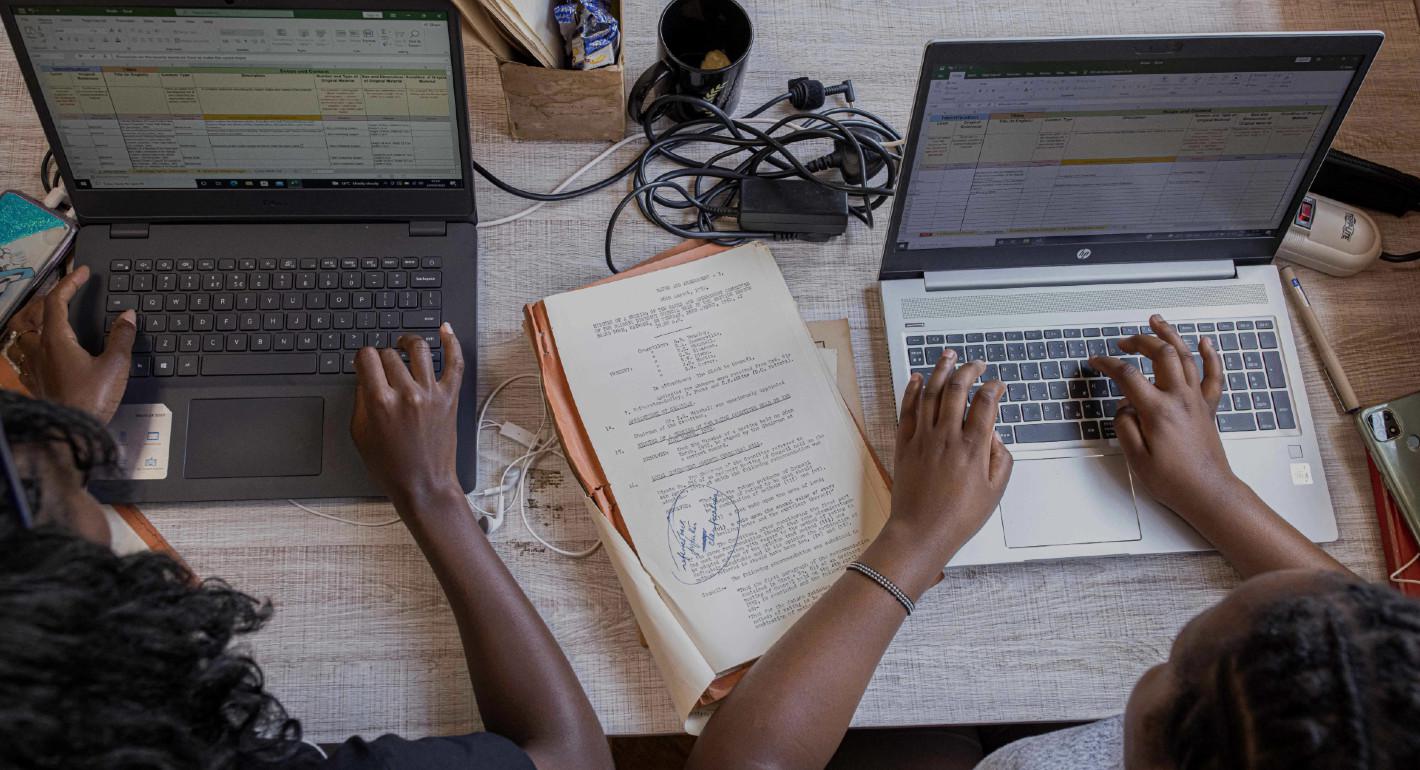

Source: Getty

International AI governance enshrines assumptions from the more well-resourced Global North. These efforts must adapt to better account for the range of harms AI incurs globally.

The design, development, and deployment of artificial intelligence (AI), as well as its associated challenges, have been heavily mapped by the Global North, largely in the context of North America. In 2020, the Global Partnership on AI and The Future Society reported that of a group of 214 initiatives related to AI ethics, governance, and social good in thirty-eight countries and regions, 58 percent originated in Europe and North America alone.1 In 2022, North America accounted for almost 40 percent of global AI revenue with less than 8 percent of the global population.2 But this geographic skew in AI’s production and governance belies the international scale at which AI adoption is occurring. Consequential decisions about AI’s purpose, functionality, and safeguards are centralized in the Global North even as their impacts are felt worldwide.

International AI governance efforts often prescribe what are deemed “universal” principles for AI to adhere to, such as being “trustworthy”3 or “human-centered.”4 However, these notions encode contexts and assumptions that originate in the more well-resourced Global North. This affects how AI models are trained and presupposes who AI systems are meant to benefit, assuming a Global North template will prove universal. Unsurprisingly, when the perspectives and priorities of those beyond the Global North fail to feature in how AI systems and AI governance are constructed, trust in AI falters. In the 2021 World Risk Poll by the Lloyd’s Register Foundation, distrust in AI was highest for those in lower-income countries.5 As AI developers and policymakers seek to establish more uniformly beneficial outcomes, AI governance must adapt to better account for the range of harms AI incurs globally.

Building on exchanges with expert colleagues whose work concerns the Global Majority (see below for a definition), this work offers a framework for understanding and addressing the unique challenges AI poses for those whose perspectives have not featured as prominently in AI governance to date. We offer three common yet insufficiently foregrounded themes for this purpose.

These challenges are often both conceptually and practically interdependent. They are also systemic in nature, with roots long predating AI. They will therefore not be resolved quickly. But progress toward trustworthy AI will hinge on a clear understanding of the ways in which current systems impede trust in AI globally. From this understanding, we propose a range of recommendations that seek to address these concerns within the boundaries of what’s achievable at present. The range of actions needed is wide and spans stakeholder groups as well as geographical locales: those in the Global North as well as the Global South can do more to ensure that AI governance establishes trust for all. The paper offers actions that governments in the Global North and the Global South should consider as they seek to build greater trust in AI amidst the limitations of global institutions.

Moreover, many of the ways that AI threatens to harm the Global South are not unique to geographic regions or even necessarily to certain political systems. These harms stem from structural inequalities and power imbalances. Thus, many of the harms identified in this work may be prevalent in Africa, Latin America, or Southeast Asia—but they may also be prevalent among marginalized communities in Europe or North America. The unifying factor comes down to where power resides and who has the agency to raise the alarm and achieve redress when harm occurs.

For this reason, we chose to frame this work in terms of how AI affects not just the Global South, but the Global Majority. This term refers to the demographic majority of the world's population—communities that have experienced some form of exploitation, racialization, and/or marginalization in relation to the Global Minority. According to Rosemary Campbell-Stephens,6 Global Majority is a collective term referring to “people who are Black, Asian, Brown, dual-heritage, indigenous to the global south, and or have been racialized as ‘ethnic minorities.’” Specifically, in this work, we use the terms Global Majority and Global Minority when discussing individuals and communities that exist within contexts of historical power disparities; we use the terms Global South and Global North when addressing nation-states and institutions.

Experts have articulated the urgent need to more intentionally include priorities from the Global South in institutional AI governance efforts, recognizing the Global North’s centricity in global AI governance. There is broad recognition that a failure to include a majority of world perspectives will set the stage for AI to entrench global inequality. The recent AI resolution adopted by the United Nations (UN) General Assembly, a resolution that the United States put forward and that over 100 countries co-sponsored, underscores the need for equitable distribution of AI’s benefits across all nations. Many well-intentioned leaders recognize inclusion as a crucial first step. But more equitable AI demands increased representation paired with a deeper understanding of the global range of risks and opportunities that AI governance must address. If AI governance systems continue to be built upon miscalculations of the benefits and risks AI imposes on the Global Majority, policymakers risk undermining trust in AI globally, effectively worsening inequities and harms rather than safeguarding against them.

Achieving economic and societal benefits from AI requires understanding and acting on the wide range of opportunities and risks that AI poses in different environments worldwide. When algorithms that are designed to drive efficiency in the Global North are deployed to the Global South without their designers appropriately considering the context, they are far more likely to fail outright. Premising the exporting of these tools on the creation and use of more locally representative datasets can help to stave off these types of failure. Being more attuned to varying local contexts also makes for more robust AI models. For example, in Tanzania, where more than 95 percent of the roadways are partially paved dirt roads, the government turned to AI-enabled tools to help prioritize their budget allocations for road maintenance.7 However, existing algorithms fell short, as they were trained on “smooth roads in the United States and Europe.” The government partnered with research scientists to curate a hand-labeled training dataset, unique and representative of Tanzania’s roadways, to train an algorithm from scratch.

Perceptions of risk depend greatly on context as well. Key AI risks facing the Global South today are often far less anchored around Global North–led notions of AI safety related to systemic failures; rather they are rooted more in long-standing patterns of extraction, exclusion, or marginalization. Many in the Global South face the unique risk of being left behind in a digital revolution while also being locked into a global AI supply chain that extracts value for foreign benefit with limited accountability.8 Contextual differences between the Global North and Global South influence AI’s potential to cause benefit or harm. Foregrounding these differences will be key to understanding what leads to unique AI governance priorities between the two.

Reliance on existing AI governance defined largely by the Global North risks exporting ill-fitted technological solutions and hard-to-implement governance models to the Global South.25 Building more universally responsive approaches to AI governance will require more sincere engagement with the ways trust in AI is challenged in the Global Majority. In this paper, we have organized these harms into three categories: harms arising from the centralization of AI production in the Global North, harms due to a failure to account for AI’s sociotechnical nature across diverse social contexts, and harms arising from practical and policy roadblocks preventing abstract principles from translating to global practice.

Much of the development of AI has emerged from the tech hubs of the Global North, particularly Silicon Valley. Those developing AI technology have decisionmaking power around when to release builds of AI models, whom to build for, and how to derive profit.26 Multinational corporations, especially those headquartered in the United States, thus play an outsize role in influencing the AI ecosystem worldwide. As of 2024, some of the world’s biggest technology corporations (Apple, Amazon, Alphabet, Meta, Microsoft, and NVIDIA) each hold so much power that their market capitalizations are larger than the gross domestic product (GDP) of any single African country.27 When combined, their market capitalizations are greater than the GDP of any single country except for the United States and China. Centralization in both model development and data creation and storage contributes to a skewed distribution of AI capacity worldwide.28

For countries beyond the Global North, adopting AI has often meant taking on a consumer role. AI applications are increasingly being developed in, by, or for those beyond the Global North, but the Global South’s market share is not commensurate with its potential market size: China and India were the only Global South countries listed alongside economies in the Global North on one tech website’s list of the top-ten economies worldwide with the most AI start-ups.29 Those in the Global South thus often play the role of AI consumers, with those in the Global North serving as AI producers. As a result, those in the Global South frequently make do with AI models built for a foreign or inappropriate context—models that are air-dropped in with insufficient support for right-sizing.

But AI reflects the priorities and idiosyncrasies of its producers. AI model developers choose to optimize their models for certain functions, like maximizing profit versus equity of outcomes. They choose benchmarking tests or decide when an AI model is field-ready.

AI model developers must also decide what accuracy rates or thresholds to accept. For example, what is an acceptable level of accuracy in a model that predicts who will gain access to credit? Even defining accuracy or error for a model is not a neutral task, because it involves encoding values about whether erring in one direction is better than another. In a model that predicts a medical patient’s likelihood of having tuberculosis, should model developers seek to minimize the number of false positive diagnoses or the number of false negative diagnoses? Is it considered worse to alarm a patient unnecessarily or to miss a chance to alert them to potentially life-threatening developments?
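The trade-off described above can be made concrete with a minimal sketch. All scores, labels, and cost weights below are hypothetical and chosen only for illustration; they do not come from any real tuberculosis model. The point is that the "best" decision threshold changes as soon as one type of error is weighted more heavily than the other, so the choice of weights is a value judgment rather than a purely technical one.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    true_pos: int
    false_pos: int
    true_neg: int
    false_neg: int


# HYPOTHETICAL model scores and ground truth (True = patient has the disease).
SCORES = [0.95, 0.80, 0.65, 0.55, 0.45, 0.40, 0.30, 0.20, 0.10, 0.05]
LABELS = [True, True, False, True, False, True, False, False, False, False]


def confusion_at(scores, labels, threshold):
    """Count outcomes when every score >= threshold is flagged as positive."""
    tp = fp = tn = fn = 0
    for score, has_condition in zip(scores, labels):
        flagged = score >= threshold
        if flagged and has_condition:
            tp += 1
        elif flagged:
            fp += 1
        elif has_condition:
            fn += 1
        else:
            tn += 1
    return Confusion(tp, fp, tn, fn)


def weighted_cost(confusion, cost_fp, cost_fn):
    """Total cost once each error type is given a (value-laden) weight."""
    return confusion.false_pos * cost_fp + confusion.false_neg * cost_fn


def best_threshold(cost_fp, cost_fn, candidates=(0.25, 0.5, 0.75)):
    """Pick the candidate threshold with the lowest weighted cost."""
    return min(
        candidates,
        key=lambda t: weighted_cost(confusion_at(SCORES, LABELS, t), cost_fp, cost_fn),
    )


# Treating a missed diagnosis as five times worse than a false alarm
# favors a low threshold; reversing the weights favors a high one.
print(best_threshold(cost_fp=1, cost_fn=5))
print(best_threshold(cost_fp=5, cost_fn=1))
```

In this toy data, lowering the threshold removes missed diagnoses at the price of more false alarms, and raising it does the opposite. Neither setting is "more accurate" in a neutral sense; which one is preferable depends on the weights, and those weights encode someone's priorities.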

None of these choices will be made independently of a person’s morals or ethics, which are invariably shaped by their social and cultural context. These, and many other critical choices made in an AI value chain, depend on people, and people reflect the norms, values, and institutional priorities in their societies.

Centralization of production can translate to centralization of power in consequential decisions. When those impacted by AI aren’t connected to those designing and governing AI systems, harm is more likely to arise. For example, in the United States a lack of diversity in the AI workforce has long been cited as a concern.30 Commercially developed algorithms used by the U.S. government to support healthcare decisions were found to be riddled with racial bias, systematically reducing the number of Black patients identified for necessary care by more than half.31

Centralized AI production also has geopolitical ramifications. It can undermine importing countries’ autonomy and agency in ostensibly domestic choices. On the one hand, a country’s ability to attract a company like Google or Microsoft to set up an AI research hub is often perceived as critically important for propelling economic growth. On the other hand, courting Big Tech’s investments may threaten long-term goals of establishing locally sustained or sovereign digital infrastructure for public benefit. This is especially so if contracts or business agreements favor extraction of resources (like data) and talent rather than reinvestment32—a dynamic all too familiar in postcolonial environments.

Governments may also worry that by implementing policies that protect their publics from dubious or outright harmful actions,33 they could be driving much-needed investment from the local economy or exacerbating the precarity of their citizens’ livelihoods. Fundamentally, it is a wholly different task for countries to engage with the leviathans of Big Tech as a consumer rather than as a producer. Governments must weigh whether a policy stance encourages or discourages potentially catalytic investment by these heavy hitters.

Individual consumers and others in civil society in the Global South also struggle to address issues with the centralization of AI production in Global North countries. These struggles arise less from geopolitical power dynamics, and more from practical roadblocks like organizational structure.

Multinational corporations often base their central headquarters in the United States with regional offices operating around the world. Regional hubs often focus on tactical operations or commerce-specific priorities for the country context. Conversely, central headquarters are where more global matters like policy development and teams tasked with promoting trustworthy or responsible AI are developed, often in the United States.

When consumers in the Global South try to reach corporate actors, whether to investigate concerns or to seek redress for harm, they are often met with regional representatives whose portfolios have little, if anything, to do with cross-cutting issues like those underpinning algorithmic harm. Even well-meaning local respondents are poorly positioned to address common AI grievances. Organizational structure thus serves as a barrier to more effective redress.

For years, openness has offered one software-centered approach to addressing the centralization of technological power.34 Crucially, for those in the Global Majority, openness in software reduces barriers to entry for smaller players and offers a practical solution to exploitative practices stemming from vendor lock-in. Lock-in occurs when a technology company, often a foreign one, binds a country into extractive data-sharing or infrastructure-provision arrangements, undermining a project’s directional independence and financial sustainability as well as a country’s sovereignty over its (digital) resources. Openness, therefore, addresses the power asymmetries arising from having just a handful of large, well-resourced corporations setting the terms for these models’ evolution in different parts of the world.

Openness in AI has served to benefit communities in the Global Majority. This rhetoric of openness in AI has resulted in tentative successes. These include the release of LLMs in under-represented or low-resource languages tailored for country- or region-specific markets. There have been at least six LLMs developed locally in India to cater to the plethora of languages and dialects spoken by the country’s more than 1 billion people.35 Likewise, in Southeast Asia, the release of SeaLLM and Southeast Asian Languages in One Network (SEA-LION), trained on ten and eleven regional languages respectively, including English and Chinese,36 has been accompanied by a number of similar initiatives in Indonesia37 and Vietnam38 exclusively focused on local languages and linguistic nuances.

With 40 percent of models today produced by U.S.-based companies,39 and with many existing models trained on the English language40 (with OpenAI self-reporting a U.S. bias41), the development of local LLMs is both a recognition of, and a response to, the underrepresentation of “low-resource” languages in machine-learning construction. It asserts representation and agency in an ecosystem dominated by a few firms on distant shores.

But “openwashing” (appearing to be open-source while continuing proprietary practices42) can perpetuate power imbalances. The openness of AI that has, on the surface, allowed for these contextual modulations lacks definitional clarity. It is contested in both interpretation and in practice, especially with the consolidation of big players in the AI sector.43 The Open Source Initiative, which maintains the definition of open source from the period of the open-source software movement of the 1990s, conducts an ongoing project to define open-source AI in a globally acceptable manner. One team of researchers has argued that distinguishing between the different forms of openness (whether merely an offer of an API or full access to source code) matters because it avoids “openwashing” systems that should instead be considered closed.44

A closer look reveals a persisting concentration of power among a few (mainly U.S.-based) companies.45 These market incumbents largely provide the developmental frameworks, computational power, and capacity underpinning the gradations of open AI. Indeed, SeaLLM and SeaLLM Chat were pre-trained on Meta’s Llama 2. Although Meta advertised Llama 2 as an open-source LLM, many others have refuted that claim because of its licensing restrictions.46

With AI, openness may even result in more, not less, centralization. Because Meta’s PyTorch and Google’s TensorFlow dominate pre-trained deep learning models, these companies are afforded a greater commercial advantage when developers create systems that align with theirs. As David Gray Widder and his coauthors observe, TensorFlow plugs into Google’s hardware calibrated for tensor processing units, which lies at the heart of its cloud computing service.47 This in turn allows Google to further market its commercial cloud-for-AI products.

It is also these market incumbents that have the necessary resources to provide and prioritize access to computational power and capacity. In some cases, specialized or proprietary hardware and software may be needed to optimize performance. OpenAI’s Triton, a Python-like programming language, is pitched as open source. Yet, it currently only works on NVIDIA’s proprietary graphics processing units.48 Current approaches to AI governance that push openness as a salve for power imbalances overlook these important dynamics.

Just as the Global South is not a monolith, neither is the global tech industry. Many companies based in the Global North have embraced openness with the aim of promoting broader access for philosophical or practical purposes, not just a profit motive. And companies have long invested resources in both addressing harms (with dedicated teams focused on responsible AI) and increasing benefits in the Global Majority (for example, through “AI for Good”49 efforts).

The forces driving multinational companies are complex and multidimensional; their false oversimplification risks undermining effective approaches for improving engagement with the Global Majority. But today’s AI industry is central to the maintenance of the status quo that has given rise to many of the outlined challenges experienced by those in the Global Majority.

AI is inherently sociotechnical. AI systems are social technologies: people and their social norms and mores inform every element of the AI value chain. And AI often directly influences people, shaping their behaviors and experiences. This creates an ongoing feedback loop, where AI is inseparable from the society that creates it. For example, human behavioral data informs recommender algorithms that in turn influence consumer behavior online. Viewing AI systems as inherently intertwined with the contexts in which they originate and operate helps illuminate the range of harms facing the Global Majority.

AI exports both technical and sociotechnical functionality to the Global Majority. AI delivers sizable profits to those spearheading the technology’s development and delivers a first mover advantage. This confers technological supremacy—the nation(s) with the most advanced technology outpaces its (or their) competitors in a very traditional sense. It also delivers something we refer to as sociotechnical supremacy, in that the values, biases, and worldviews of AI developers can become subtly embedded and then exported in AI models and the datasets upon which these models are based.

The global export of AI effectively translates to the export of various social assumptions and cultural norms via something akin to an AI-based version of a Trojan horse.50 This positions AI leaders to establish the de facto starting point for future advances. And due to AI’s increasing ubiquity in consequential decisionmaking, behavior-nudging, and market-shaping efforts, it has far-reaching impacts. As AI spreads, AI superpowers’ sociotechnical supremacy effectively works in tension with those who desire greater autonomy or diversity in how decisions, behaviors, and markets evolve worldwide.

Cultural norms or values embedded in AI may reinforce determinations of what are deemed desirable or undesirable behaviors simply by dint of how or where an AI model was trained. For example, generative AI text-to-image systems like Midjourney, DALL-E, and Stable Diffusion have been found to produce highly stereotypical versions of the world.51 Search terms like “Indian” or “Indian person” produced images of older men in turbans, whereas similar searches for Americans produced predominantly White women with an American flag as their backdrop.52 Experts suggest that this is probably due to “an overrepresentation of women in U.S. media, which in turn could be reflected in the AI’s training data.”53 Global Majority actors often face harms arising from exclusion or misrepresentation in the AI development process along these lines.

Integration of faulty AI into quotidian but socially critical systems can irreparably undermine trust. Experts from the Global Majority have illustrated the scope and pervasiveness of these types of harms, highlighting “ordinary harms” that arise as people interact with AI systems in their daily lives.54 For example, an algorithmic decisionmaking tool in India designed to detect welfare fraud removed 1.9 million claimants from the roster. When a sample of 200,000 individuals was reanalyzed, 15,000 of them (about 7 percent) were found to have been incorrectly removed because of the algorithm’s faulty predictions.55
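To give a sense of scale, the figures quoted above can be worked through as simple arithmetic. The sketch below uses only the three numbers in the text. The extrapolation step is an assumption made here for illustration, not a finding reported by the source: it supposes the reanalyzed sample was drawn from, and is representative of, all removed claimants.

```python
from fractions import Fraction

# Figures as quoted in the text.
REMOVED_TOTAL = 1_900_000            # claimants removed from the roster
SAMPLE_SIZE = 200_000                # individuals reanalyzed
WRONGLY_REMOVED_IN_SAMPLE = 15_000   # found to be incorrectly removed

# Share of the reanalyzed sample that was wrongly removed.
sample_error_rate = Fraction(WRONGLY_REMOVED_IN_SAMPLE, SAMPLE_SIZE)

# ASSUMPTION (not from the source): the sample is representative of
# everyone removed, so the same rate applies to the full group.
extrapolated_wrongful_removals = int(REMOVED_TOTAL * sample_error_rate)

print(f"Error rate in sample: {float(sample_error_rate):.1%}")
print(f"Implied wrongful removals if the rate held: {extrapolated_wrongful_removals:,}")
```

With these rounded inputs the sample rate works out to 7.5 percent, in line with the roughly 7 percent cited. Under the stated assumption, an error rate that sounds small would still correspond to well over a hundred thousand people, which is the sense in which a single algorithmic error operates at a systemic scale.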

The scale of populations impacted by AI systems includes individuals directly implicated in such AI-driven decisions and everyone else dependent on such individuals. The impact of harm here is both increased economic precarity and exacerbated distrust in AI systems and in the institutions that deploy them. Trust is universally threatened when mistakes are made by a person or by a machine. But the potential and scale of AI-borne distrust are magnified, as AI operates at a larger, systemic level; one algorithmic error can tip the scales for swaths of people.

In the above example, frontline government workers, preferring to trust the algorithms, did not believe citizens who furnished documentation to prove that they were, indeed, eligible for welfare.56 AI authority (the tendency of people to overestimate AI’s accuracy, underplay its capacity for making errors, and legitimize its decisionmaking) poses more severe risks for those who already struggle to have their grievances acknowledged in an analog world. Those who aren’t well-served by the status quo face a higher burden of proof when seeking to change or challenge an AI-enabled decision. Moreover, the degree of authority conferred to AI often differs meaningfully across cultures and across countries, foregrounding the importance of socially contextualizing AI governance.57

These concerns have arisen with many other digital tools deployed as solutions that users initially trust, only to face trust breakdowns when failures inevitably occur. AI does not pose altogether new challenges in this regard; it threatens to increase the scale and burden of their reach. These trust breakdowns stand to hinder AI’s future use and adoption. For instance, citizens in India grew skeptical of the state-deployed public redressal platforms designed to address civic complaints, choosing to rely on trusted offline mechanisms or engaging directly with municipal employees.58

Data divides also lead to harm in sociotechnical systems. Acknowledging the importance of representative data has emerged as a shared sociotechnical challenge. Often, communities, groups, and even countries in the Global Majority lack a digital record they could even suggest to be used for tuning imported models, let alone a record to create indigenous models with. For example, African dialects and languages are significantly underrepresented in the broad body of training data that natural language processing (NLP) algorithms rely on. Despite there being over 200 million Swahili speakers and 45 million Yoruba speakers worldwide, the level of representation for these languages in the broad body of online language data that feeds NLP models is paltry.59 Funders, nongovernmental organizations, and corporations have begun trying to address this skew in representation through dedicated data creation and curation efforts, but demand still far exceeds supply.60

But once created, data’s social and financial value can create tensions for marginalized communities. The global AI supply chain risks perpetuating a model of resource extraction for the Global Majority in terms of how data are sourced and shared. Especially as organizations seek to extend the benefits of generative language models to unrepresented or underrepresented communities, AI will require more data sources specific to those communities. Even as many impatiently push for this outcome of more globally inclusive language models, tensions around data extractivism have emerged. This is especially pronounced for communities who have worked to preserve agency over their communal knowledge in the face of colonization,61 or those who recognize that language, culture, and politics are inextricably linked,62 and the mishandling of such communal knowledge is quite consequential. For example, will knowledge from indigenous communities be misappropriated for commercialization, such as for the creation of new drugs?64 These patterns of extraction have plagued the Global Majority for years, and AI stands poised to deepen them, fomenting distrust if these patterns are not explicitly addressed and protected against.

Data underpinning AI is often social and political. Politics and culture can shape data collection in sometimes surprising ways, as data fields embed social customs or power structures. For example, data on financial records may describe who has formal assets while failing to capture assets of those who don’t interact with formal financial institutions. Census data describes which ethnicities live together in which neighborhoods. Swapping data from one context to represent another overlooks these important nuances.

Data collection methods can also influence, and be influenced by, who has more power or social capital in a community. For example, survey enumerators’ own gender can influence gender balance or candor in survey responses.63 In some cultures, a male enumerator necessitates engagement with a male head of household, whereas a female enumerator would engage with women. Social norms manifest subtly in data, representing different samplings of reality. Models built from these data will reflect cultural and societal phenomena unique to the contexts from which they are drawn.

Cultural differences affect data as well. For example, in some communities, counting one’s children is considered bad luck, so seemingly neutral data fields in a household survey systematically depict reality inaccurately. If model developers build AI lacking awareness of how these untruths may distort the underlying data, they build AI on shaky foundations. Assuming neutrality or scalability of AI across contexts with widely varying cultures or political influences means mischaracterizing how society shapes AI.

AI models’ benefits may not directly or fully translate across social contexts. Platform companies, such as Uber, that use algorithmic management tools have disrupted informal labor markets in the Global Majority, offering regular work and structured income that has reportedly allowed drivers to make better financial decisions64 for a more secure future.65 But exporting AI without appropriately acknowledging its sociotechnical qualities may also destabilize extant social structures and exacerbate harm for users.

For example, experts from Southeast Asia note how algorithmic management tools used by ride-hailing platforms like Uber undermine the interpersonal nature of traditional taxi networks. Uber’s algorithm was originally designed to provide highly individualized, piecemeal work in the Global North to supplement users’ earnings during a recession.66 The model was then exported to countries where cab drivers are part of an established informal industry reliant on a strong underlying labor union network.67 This type of task-matching algorithm, which originally catered to more individualized at-will work structures, led to disintermediation in the Global Majority, dismantling workers’ ability to self-organize for collective bargaining and address common grievances stemming from the algorithm’s opaque decisionmaking.

AI governance globally has demanded more accessible models, emphasizing the need for technical explainability to counter the opaque nature of many of today’s AI models. But a hyperfocus from Global North researchers on the technical manifestations of explainability as disjointed from the sociotechnical leads to fixes that fall short of achieving governance goals.

The process behind AI-driven decisionmaking remains illegible for many in the Global Majority who interact with these systems exclusively as consumers.68 Legibility, or explainability, is often defined primarily in terms of the person closest to the model, and models’ designers are often inaccessible for questioning by those in the Global Majority. This leaves users in the Global Majority with limited visibility into why AI may have predicted an outcome, as well as whether the outcome was accurate. And when algorithmic error leads to faulty decisions—as is common for models taken too far beyond their testing grounds—users in the Global Majority have little recourse to interrogate these faulty decisions. If model limitations are not effectively understood across contexts—and unless these limitations also are effectively communicated by developers across contexts—trustworthiness will not be attained.69

AI systems produced in and for the Global Minority currently form the basis for much of the AI that reaches the Global Majority. As detailed above, various kinds of harm frequently arise when a model’s assumed context is misaligned from its actual context.

In one example from Kenya cited by Jake Okechukwu Effoduh, cattle herders used a U.S.-designed image vision model to identify malnourishment in their livestock. The software repeatedly misdiagnosed malnourishment in Kenyan cattle because it mistakenly based its assumptions of livestock’s healthy weight on that of American cattle.70 The tool had been trained with data on Western Holstein, Angus, or Hereford breeds, whose optimal weight was higher than that of leaner local breeds like Boran and Sahiwal. However, interviews with cattle herders using the tool in Kiambu County showed that they were not aware of these discrepancies in how the model had been trained, and the model’s repeated misdiagnoses led to mistrust in the AI system.

In this case, if the original model’s developers had visibility into how cattle weights translated to model weights, influencing the model’s predictions, this would be deemed explainable AI. But the developers’ ability to access an explanation like this fell short of achieving explainability in practice. For the model to be trusted, those using it (the herders, in this case) must also be prioritized in determining how explainability is achieved in practice. The herders were furthest removed from the model’s development but closest to feeling the impacts of the model’s predictions. These models were meant to inform rearing practices for their herds, decisions with livelihood-shaping consequences. Had key aspects of the model been more accessible to the herders, their ability to intuit the veracity of the model’s assessments would have been improved. This would have impacted the degree to which the model was seen as explainable and trustworthy.

Transparency in tech has proven widely beneficial for years. Many in the Global Majority have long viewed, and even pushed for, an open approach to source codes, models, and data as a means to give a platform to local ideas in a digital commons. By democratizing access to information and leveraging knowledge networks around the world, openness advances the innovation landscape by encouraging testability, collaboration, and interoperability.

Openness has underpinned vital research with benefits the world over. For example, the Canadian company BlueDot used text and data mining (TDM), a common AI practice, to rapidly analyze public data and predict the early spread of the coronavirus pandemic.71 TDM processes invariably draw on copyrighted works. Some countries allow copyright exceptions for TDM in public-benefit research, but copyright restrictions remain prevalent in the Global South.72

But Global Majority researchers face disproportionate burdens around openness. Those who seek to leverage open models and access open datasets often face additional barriers in navigating copyright restrictions. For example, no Latin American country currently provides TDM exceptions to copyright for research purposes, prompting activism around the Global South’s right to research. Experts view the growing number of Latin American countries drafting national AI strategies as an opportunity to include TDM exceptions.73 In another example, Masakhane, a network of African language NLP researchers, began efforts to leverage a publicly available corpus of Biblical texts published in more than 300 languages.74 This was an invaluable resource for improving NLP functionality across many under-resourced African languages. But while the texts were publicly available, their copyright was held privately by the U.S.-based Jehovah’s Witnesses. Masakhane ultimately faced copyright restrictions when seeking formal access for model development purposes, creating further hurdles for NLP work on the continent.75 These types of barriers are commonplace for Global Majority researchers.

Many in the Global Majority have also seized on the openness movement to augment the availability of data for building LLMs indigenously or fine-tuning open models to better serve local languages. Existing open LLMs rely primarily on two data sources. One is scraped web data compiled for public use, such as Common Crawl. The other is The Pile, an 825-gigabyte English-text corpus comprising twenty-two smaller datasets from professional and academic sources.76 Some communities underrepresented in these types of open datasets have worked to include their own data in order to ensure that models based on these resources better serve their communities. Examples include adding lower-resourced languages into an NLP corpus or region-specific crop data to a global agricultural database. In communities with rich histories of oral rather than written traditions, accurately recording such data can incur additional costs both quantifiable and not. Apart from recording equipment, there may also be a need for transcription and interpretation expertise.

The process of building and releasing these datasets is often presented as a contribution to the public good. But it does not fully account for the financial and personnel costs involved. The tech community’s expectation that such datasets will be donated for the public good—data often compiled over generations in the case of indigenous communities, without adequate compensation or ownership mechanisms—can be especially sensitive when data originates from marginalized communities with long, painful histories of colonization or oppression.77 What is more, these histories also color the worldwide reception of English-dominant LLMs. Colonizers often relied on the tactic of weaponizing language to deprive communities of their means of cohesion or identity.78 This involved imposing a colonial language while suppressing local languages and dialects, whether through formal institutions like schools or through persecution and abuse,79 leading to language being referred to as a “war zone.”80 These historical and political undertones continue to find resonance as the Global Majority interacts with tools that some portray as a new form of colonization.81

In some instances, indigenous communities have managed to reclaim rightful control of their own data even as they continue to struggle82 against open-source appropriation enabled by the systemic inequities that privilege market incumbents.83 In other instances, indigenous knowledge sits at an awkward crossroads between AI and notions of intellectual property that prioritize the individual rather than community and that put corporations and commercial interests ahead of relationships.84

As Chijioke Okorie and Vukosi Marivate succinctly convey, there are sayings in the Igbo and Setswana languages “that speak to how discussions about taking (or bringing) often revolve around other people’s property. . . . People always recommend the sharing of property when such property is not theirs.”85

Opening up the knowledge bases of communities in the Global Majority may well benefit them in unprecedented ways. But it may also result in their loss of agency due to the more fundamental, structural barriers, explained above, that prop up the AI ecosystem. These reminders underscore just how sidelined traditional or cultural knowledge and its stewards have been in the development of AI and of the regulations that have evolved alongside it.

Many of the challenges mentioned would benefit greatly from well-known solutions such as increasing both the international and domestic funding available for developing enablers of the AI ecosystem across the Global South. Far more is needed to build inclusive digital infrastructure, more representative data resources, and a more diverse global AI workforce and AI-fluent global citizenry. Each of these things can, and must, be prioritized in pursuit of more trustworthy AI. Yet these actions are not straightforward and continue to prove incredibly difficult to achieve.

Given the rate at which AI is proliferating worldwide, we must explore how the international community can consider additional approaches that would allow AI to merit global trust even as other relevant and fundamental goals of digital inclusion remain stubbornly out of reach.

AI’s power derives from its ability to discern patterns unique to the datasets it has been trained on and its ability to generate predictions or novel content based on those historical patterns. These patterns often concern human behavior, choices, politics, prejudices, and even missteps or errors that need correcting. How the world governs AI must acknowledge the range of ways these truths intersect with society.

There is undoubtedly much to gain from the strength of existing multilateral approaches to establishing responsible AI frameworks and beneficial global practices, such as the OECD’s AI Principles or the UN Educational, Scientific and Cultural Organization’s Recommendation on the Ethics of AI. The UN’s recently launched High-Level Advisory Body on AI moves even closer to a more representative deliberative body on AI governance. But even as these efforts have begun to acknowledge the importance of increasing inclusivity and global representation, there remains a poor understanding of what issues most need addressing to help those living beyond the Global North to trust AI.

Establishing trust in AI globally is a daunting task. As we seek to frame this research in service to that broader goal, we can draw lessons in global relativism from how the world has approached instituting safeguards for technologies that predated AI tools like ChatGPT. For example, cars must have seatbelts and brakes, and roads have enforceable rules. These measures have the general effect of building trust in technologies so that they are adopted more broadly and widely used. But anyone who has traveled much can recount tales of how road safety is defined quite differently across contexts. Moments perceived as dangerous or death-defying in a vehicle in the Global North may not cause much consternation if they occur on the roads of Hanoi or Addis Ababa. Each jurisdiction puts in place rules that roughly track across countries but that are differently enforced, differently prioritized, and differently suited to the context at hand. (In the road example, for instance, one must consider whether cars are sharing the road with tuk-tuks and livestock.)

AI is, therefore, both local and global in consequential ways—many global AI models are highly interconnected, sociotechnical systems. They encode rules and information about their society of origin or use in nonexplicit ways. Rules of the road in one jurisdiction become (often unknowingly or unconsciously) embedded in AI that is then exported to other countries. And there is currently no agreed-upon framework for ensuring that AI systems built to preserve social norms in Detroit function appropriately in Dhaka or vice versa.

The challenges detailed in this work arise against a trust deficit that long predates AI: the backdrop of colonization, racism, and histories of exploitation and uncompensated or undercompensated extraction all inform what trust entails for the Global Majority. These historical and political dynamics are much larger than AI alone, and they will not be reformed or righted through AI. But AI’s sociotechnical nature threatens to reinforce these dynamics if such outcomes are not explicitly guarded against. Similarly, AI’s benefits will depend on how proactively and effectively risks are mitigated. There is a global thirst for AI that stands to usher in an era of more equitable progress if trust in AI can be attained and maintained.

The long-term risks and benefits of AI will quite likely impact people all around the world, and they will pose unique challenges that observers cannot yet understand. But there is significant reason to think that humanity will be better off in the future if stakeholders work to get AI governance right today. For those in the Global Minority who are genuinely interested in arriving at a convergence on trust with the Global Majority, one of the most powerfully obvious yet enduringly stubborn ways of narrowing the existing gap is to carefully listen to the lived experiences that, in turn, shape the priorities of the world’s largest populations. It behooves those closest to the centers of power—like governments of the Global North—to meaningfully engage those representing the Global Majority. Addressing the roots of distrust today would carry over into safeguards far more robust and enduring in the future, online and offline. We hope this piece adds to that evolving conversation.

1 “Report Release with the Global Partnership on AI (GPAI) on Responsible AI and AI in Pandemic Response,” The Future Society, December 17, 2020, https://thefuturesociety.org/report-release-with-the-global-partnership-on-ai/.

2 “North America Population 2024,” World Population Review, accessed April 26, 2024, https://worldpopulationreview.com/continents/north-america-population.

3 Nikki Pope, “What Is Trustworthy AI?,” NVIDIA Blog, March 1, 2024, https://blogs.nvidia.com/blog/what-is-trustworthy-ai/.

4 “The World Is Split on Its Trust in Artificial Intelligence,” The Lloyd’s Register Foundation World Risk Poll, accessed April 26, 2024, https://wrp.lrfoundation.org.uk/news-and-funding/the-world-is-split-on-its-trust-in-artificial-intelligence/.

5 “The World Is Split on Its Trust in Artificial Intelligence.”

6 Rosemary Campbell-Stephens, “Global Majority Leadership,” Rosemary Campbell Stephens - The Power of the Human Narrative (blog), April 22, 2022, https://rosemarycampbellstephens.com/global-majority-leadership/.

7 Asad Rahman, “On AI and Community Owned Data,” Asadrahman.Io (blog), April 6, 2024, https://asadrahman.io/2024/04/06/on-ai-and-community-owned-data/.

8 Karen Hao [@_KarenHao], “Update: This Is Worse than I Thought. Workers in Nigeria, Rwanda, and South Africa Are Also Reporting That They Are Being Blocked from the Website or Cannot Create an Account. It’s Possible That Scale AI Suspended Remotasks Far beyond Just Kenya.,” Tweet, Twitter, March 18, 2024, https://twitter.com/_KarenHao/status/1769664691658428539.

9 “AI Compute Definition – Data Management Glossary,” Komprise, accessed April 26, 2024, https://www.komprise.com/glossary_terms/ai-compute/.

10 Rose M. Mutiso, “AI in Africa: Basics Over Buzz,” Science 383, no. 6690 (March 28, 2024): eado8276, https://doi.org/10.1126/science.ado8276.

11 “Widening Digital Gap between Developed, Developing States Threatening to Exclude World’s Poorest from Next Industrial Revolution, Speakers Tell Second Committee,” UN Meetings Coverage and Press Releases, accessed April 26, 2024, https://press.un.org/en/2023/gaef3587.doc.htm.

12 Danni Yu, Hannah Rosenfeld, and Abhishek Gupta, “The ‘AI Divide’ between the Global North and Global South,” World Economic Forum, January 16, 2023, https://www.weforum.org/agenda/2023/01/davos23-ai-divide-global-north-global-south/.

13 “The Global AI Talent Tracker 2.0,” MacroPolo, accessed April 26, 2024, https://macropolo.org/digital-projects/the-global-ai-talent-tracker/.

14 Tim Keary, “Top 10 Countries Leading in AI Research & Technology in 2024,” Techopedia (blog), November 13, 2023, https://www.techopedia.com/top-10-countries-leading-in-ai-research-technology.

15 Rebecca Leam, “Generative AI in Low-Resourced Contexts: Considerations for Innovators and Policymakers,” Bennett Institute for Public Policy (blog), June 26, 2023, https://www.bennettinstitute.cam.ac.uk/blog/ai-in-low-resourced-contexts/.

16 Libing Wang and Tianchong Wang, “Small Language Models (SLMs): A Cheaper, Greener Route into AI,” UNESCO, November 30, 2023, https://www.unesco.org/en/articles/small-language-models-slms-cheaper-greener-route-ai.

17 Karen Hao and Andrea Paola Hernandez, “How the AI Industry Profits from Catastrophe,” MIT Technology Review, accessed April 26, 2024, https://www.technologyreview.com/2022/04/20/1050392/ai-industry-appen-scale-data-labels/.

18 Vittoria Elliott and Tekendra Parmar, “‘The Despair and Darkness of People Will Get to You,’” Rest of World, July 22, 2020, https://restofworld.org/2020/facebook-international-content-moderators/.

19 Billy Perrigo, “Inside Facebook’s African Sweatshop,” TIME, February 14, 2022, https://time.com/6147458/facebook-africa-content-moderation-employee-treatment/.

20 Deepa Parent and Katie McQue, “‘I Log into a Torture Chamber Each Day’: The Strain of Moderating Social Media,” The Guardian, September 11, 2023, sec. Global development, https://www.theguardian.com/global-development/2023/sep/11/i-log-into-a-torture-chamber-each-day-strain-of-moderating-social-media-india.

21 Elizabeth Dwoskin, Jeanne Whalen, and Regine Cabato, “Content Moderators at YouTube, Facebook and Twitter See the Worst of the Web — and Suffer Silently,” Washington Post, July 25, 2019, https://www.washingtonpost.com/technology/2019/07/25/social-media-companies-are-outsourcing-their-dirty-work-philippines-generation-workers-is-paying-price/.

22 Jibu Elias, “How AI Can Transform Rural Healthcare in India,” INDIAai, accessed April 26, 2024, https://indiaai.gov.in/article/how-ai-can-transform-rural-healthcare-in-india.

23 “Getting Real About AI for the Bottom-of-the-Pyramid: Improving the Economic Outcomes of Smallholder Farmers in Africa,” Digital Planet (blog), accessed April 26, 2024, https://digitalplanet.tufts.edu/getting-real-about-ai-for-the-bottom-of-the-pyramid/.

24 “IFAD Highlights the Transformative Power of Innovation for Small-Scale Farmers,” IFAD, accessed April 26, 2024, https://www.ifad.org/en/web/latest/-/ifad-highlights-the-transformative-power-of-innovation-for-small-scale-farmers.

25 See Bulelani Jili and Esther Tetruashvily, in a forthcoming paper from the Carnegie Endowment for International Peace, 2024.

26 Ilan Strauss et al., “To Understand the Risks Posed by AI, Follow the Money,” The Conversation, April 10, 2024, http://theconversation.com/to-understand-the-risks-posed-by-ai-follow-the-money-225872.

27 “The Largest Seven U.S. Tech Companies Have More Financial Power than Almost Any Country in the World,” MarketScreener, February 19, 2024, https://www.marketscreener.com/quote/stock/TESLA-INC-6344549/news/The-largest-seven-U-S-tech-companies-have-more-financial-power-than-almost-any-country-in-the-world-45981954/.

28 “Data Justice Policy Brief: Putting Data Justice into Practice,” Global Partnership on AI, accessed April 26, 2024, https://gpai.ai/projects/data-governance/data-justice-policy-brief-putting-data-justice-into-practice.pdf.

29 Lyle Opolentisima, “The Top 10 Countries Leading the AI Race,” Daily Infographic, January 17, 2024, https://dailyinfographic.com/the-top-10-countries-leading-the-ai-race.

30 Karen Hao, “AI’s White Guy Problem Isn’t Going Away,” MIT Technology Review, accessed April 26, 2024, https://www.technologyreview.com/2019/04/17/136072/ais-white-guy-problem-isnt-going-away/; Sinduja Rangarajan, “Bay Area Tech Diversity: White Men Dominate Silicon Valley,” Reveal, June 25, 2018, http://revealnews.org/article/heres-the-clearest-picture-of-silicon-valleys-diversity-yet/.

31 Ziad Obermeyer et al., “Dissecting Racial Bias in an Algorithm Used to Manage the Health of Populations,” Science 366, no. 6464 (October 25, 2019): 447–53, https://doi.org/10.1126/science.aax2342.

32 “Kenya Becomes the First Country to Suspend Sam Altman’s Worldcoin A.I.-Crypto Scheme,” Fortune, accessed April 26, 2024, https://fortune.com/2023/08/02/kenya-first-country-to-suspend-worldcoin-altman-privacy-orb-iris-crypto-ai/.

33 Perrigo, “Inside Facebook’s African Sweatshop.”

34 Paul Keller and Alek Tarkowski, “The Paradox of Open,” accessed April 26, 2024, https://paradox.openfuture.eu/.

35 Himanshi Singh, “India’s AI Leap 🇮🇳 : 10 LLMs That Are Built in India,” Analytics Vidhya (blog), December 21, 2023, https://www.analyticsvidhya.com/blog/2023/12/llms-that-are-built-in-india/.

36 “Alibaba Cloud: Cloud Computing Services,” Alibaba Cloud, accessed April 26, 2024, https://www.alibabacloud.com; Nithya Sambasivan et al., “Re-Imagining Algorithmic Fairness in India and Beyond” (arXiv, January 26, 2021), https://doi.org/10.48550/arXiv.2101.09995; “aisingapore/sealion,” GitHub, AI Singapore, accessed April 29, 2024, https://github.com/aisingapore/sealion.

37 “Indosat Ooredoo Hutchison and Tech Mahindra Unite to Build Garuda, an LLM for Bahasa Indonesia and Its Dialects,” accessed April 26, 2024, https://www.techmahindra.com/en-in/indosat-techm-to-build-garuda-llm-for-bahasa-indonesia/; “Launches LLM Komodo-7B, Yellow.Ai Provides 11 Of Indonesia’s Regional Languages,” accessed April 29, 2024, https://heaptalk.com/news/launches-llm-komodo-7b-yellow-ai-provides-11-of-indonesias-regional-languages/; “WIZ.AI Unveils First Foundation Large Language Model (LLM),” WIZ.AI, January 4, 2024, https://www.wiz.ai/wiz-ai-unveils-southeast-asias-first-foundation-large-language-model-llm-for-bahasa-indonesian-amplifying-regional-representation-in-mainstream-ai/.

38 Minh Thuan Nguyen et al., “ViGPTQA - State-of-the-Art LLMs for Vietnamese Question Answering: System Overview, Core Models Training, and Evaluations,” in Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing: Industry Track, ed. Mingxuan Wang and Imed Zitouni (EMNLP 2023, Singapore: Association for Computational Linguistics, 2023), 754–64, https://doi.org/10.18653/v1/2023.emnlp-industry.70.

39 Anisa Menur, “How SEA-LION Aims to Bridge the Cultural Gap Existing in Popular AI Tools,” e27, accessed April 26, 2024, https://e27.co/how-sea-lion-aims-to-bridge-the-cultural-gap-existing-in-popular-ai-tools-20240131/.

40 Paresh Dave, “ChatGPT Is Cutting Non-English Languages Out of the AI Revolution,” Wired, accessed April 26, 2024, https://www.wired.com/story/chatgpt-non-english-languages-ai-revolution/.

41 “GPT-4V(Ision) System Card,” accessed April 29, 2024, https://openai.com/research/gpt-4v-system-card.

42 Henry Kronk, “What Is ‘Open’? Openwashing and the Half-Truths About Openness,” eLearningInside News (blog), January 1, 2018, https://news.elearninginside.com/open-openwashing-half-truths-openness/.

43 “Llama and ChatGPT Are Not Open-Source,” IEEE Spectrum, accessed April 26, 2024, https://spectrum.ieee.org/open-source-llm-not-open.

44 David Gray Widder, Sarah West, and Meredith Whittaker, “Open (For Business): Big Tech, Concentrated Power, and the Political Economy of Open AI,” SSRN Scholarly Paper (Rochester, NY, August 17, 2023), https://doi.org/10.2139/ssrn.4543807.

45 Widder, West, and Whittaker, “Open (For Business).”

46 Steven J. Vaughan-Nichols, “Meta’s Llama 2 Is Not Open Source,” The Register, accessed April 26, 2024, https://www.theregister.com/2023/07/21/llama_is_not_open_source/.

47 David Gray Widder et al., “Limits and Possibilities for ‘Ethical AI’ in Open Source: A Study of Deepfakes,” in 2022 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’22: 2022 ACM Conference on Fairness, Accountability, and Transparency, Seoul Republic of Korea: ACM, 2022), 2035–46, https://doi.org/10.1145/3531146.3533779.

48 Dylan Patel, “How Nvidia’s CUDA Monopoly In Machine Learning Is Breaking - OpenAI Triton And PyTorch 2.0,” SemiAnalysis, accessed April 29, 2024, https://www.semianalysis.com/p/nvidiaopenaitritonpytorch.

49 “AI for Good,” AI for Good (blog), accessed April 29, 2024, https://aiforgood.itu.int/about-ai-for-good/.

50 Amy Yeboah Quarkume, “ChatGPT: A Digital Djembe or a Virtual Trojan Horse?,” Howard Magazine, accessed April 29, 2024, https://magazine.howard.edu/stories/chatgpt-a-digital-djembe-or-a-virtual-trojan-horse.

51 Ananya, “AI Image Generators Often Give Racist and Sexist Results: Can They Be Fixed?,” Nature 627, no. 8005 (March 19, 2024): 722–25, https://doi.org/10.1038/d41586-024-00674-9; Leonardo Nicoletti and Dina Bass, “Humans Are Biased. Generative AI Is Even Worse,” Bloomberg, February 1, 2024, https://www.bloomberg.com/graphics/2023-generative-ai-bias/; Nitasha Tiku, Kevin Schaul, and Szu Yu Chen, “These Fake Images Reveal How AI Amplifies Our Worst Stereotypes,” Washington Post, accessed April 29, 2024, https://www.washingtonpost.com/technology/interactive/2023/ai-generated-images-bias-racism-sexism-stereotypes/.

52 Victoria Turk, “How AI Reduces the World to Stereotypes,” Rest of World, October 10, 2023, https://restofworld.org/2023/ai-image-stereotypes/.

53 Turk, “How AI Reduces the World to Stereotypes.”

54 See Ranjit Singh, “Ordinary Ethics of Governing AI,” Carnegie Endowment for International Peace, April 2024.

55 Ameya Paleja, “AI Rolled out in India Declares People Dead, Denies Food to Thousands,” Interesting Engineering, January 25, 2024, https://interestingengineering.com/culture/algorithms-deny-food-access-declare-thousands-dead.

56 Tapasya, Kumar Sambhav, and Divij Joshi, “How an Algorithm Denied Food to Thousands of Poor in India’s Telangana,” Al Jazeera, accessed April 29, 2024, https://www.aljazeera.com/economy/2024/1/24/how-an-algorithm-denied-food-to-thousands-of-poor-in-indias-telangana.

57 Shivani Kapania et al., “‘Because AI Is 100% Right and Safe’: User Attitudes and Sources of AI Authority in India,” in Proceedings of the 2022 CHI Conference on Human Factors in Computing Systems, CHI ’22 (New York, NY, USA: Association for Computing Machinery, 2022), 1–18, https://doi.org/10.1145/3491102.3517533.

58 Lakshmee Sharma, Kanimozhi Udhayakumar, and Sarayu Natarajan, “Last Mile Access to Urban Governance,” Aapti Institute, February 13, 2021, https://aapti.in/blog/last-mile-access-to-urban-governance/.

59 Rasina Musallimova, “Scientists Working on AI Tools That Speak African Languages,” Sputnik Africa, August 21, 2023, https://en.sputniknews.africa/20230821/scientists-working-on-ai-tools-that-speak-african-languages-1061493013.html; “Introduction to African Languages,” Harvard University, accessed April 29, 2024, https://alp.fas.harvard.edu/introduction-african-languages.

60 “Mozilla Common Voice,” accessed April 29, 2024, https://commonvoice.mozilla.org/; “Lacuna Fund,” Lacuna Fund, accessed April 29, 2024, https://lacunafund.org/.

61 Edel Rodriguez, “An MIT Technology Review Series: AI Colonialism,” MIT Technology Review, accessed April 29, 2024, https://www.technologyreview.com/supertopic/ai-colonialism-supertopic/.

62 Neema Iyer, “Digital Extractivism in Africa Mirrors Colonial Practices,” Stanford Institute for Human-Centered Artificial Intelligence, August 15, 2022, https://hai.stanford.edu/news/neema-iyer-digital-extractivism-africa-mirrors-colonial-practices.

63 Nancy Vollmer et al., “Does Interviewer Gender Influence a Mother’s Response to Household Surveys about Maternal and Child Health in Traditional Settings? A Qualitative Study in Bihar, India,” PLoS ONE 16, no. 6 (June 16, 2021): e0252120, https://doi.org/10.1371/journal.pone.0252120.

64 “Digital Platforms in Africa: The ‘Uberisation’ of Informal Work,” German Institute for Global and Area Studies (GIGA), accessed April 29, 2024, https://www.giga-hamburg.de/en/publications/giga-focus/digital-platforms-in-africa-the-uberisation-of-informal-work.

65 Ujjwal Sehrawat et al., “The Everyday HCI of Uber Drivers in India: A Developing Country Perspective,” Proceedings of the ACM on Human-Computer Interaction 5, no. CSCW2 (October 18, 2021): 424:1-424:22, https://doi.org/10.1145/3479568.

66 Tom Huddleston Jr., “Worried about the Economy? These 5 Successful Companies Were Started during the Great Recession,” CNBC, December 29, 2022, https://www.cnbc.com/2022/12/29/uber-to-venmo-successful-companies-started-during-great-recession.html.

67 Tarini Bedi, “Taxi Drivers, Infrastructures, and Urban Change in Globalizing Mumbai,” City & Society 28, no. 3 (2016): 387–410, https://doi.org/10.1111/ciso.12098; Julia K. Giddy, “Uber and Employment in the Global South – Not-so-Decent Work,” Tourism Geographies 24, no. 6–7 (November 10, 2022): 1022–39, https://doi.org/10.1080/14616688.2021.1931955.

68 Sambasivan et al., “Re-Imagining Algorithmic Fairness in India and Beyond.”

69 Upol Ehsan et al., “Charting the Sociotechnical Gap in Explainable AI: A Framework to Address the Gap in XAI,” Proceedings of the ACM on Human-Computer Interaction, April 1, 2023, https://dl.acm.org/doi/abs/10.1145/3579467; Chinasa T. Okolo, “Towards a Praxis for Intercultural Ethics in Explainable AI” (arXiv, April 25, 2023), https://arxiv.org/abs/2304.11861.

70 See Jake Okechukwu Effoduh, “A Global South Perspective on Explainable AI,” Carnegie Endowment for International Peace, April 2024. The author based these findings on local interviews they conducted with dairy farmers in Kiambu County, Kenya.

71 Carolina Botero, “Latin American AI Strategies Can Tackle Copyright as a Legal Risk for Researchers,” Carnegie Endowment for International Peace, April 30, 2024.

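72 Botero, “Latin American AI Strategies Can Tackle Copyright as a Legal Risk for Researchers.”

73 Botero, “Latin American AI Strategies Can Tackle Copyright as a Legal Risk for Researchers.”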

74 “A ‘Blatant No’ from a Copyright Holder Stops Vital Linguistic Research Work in Africa,” Walled Culture: A Journey Behind Copyright Bricks, May 16, 2023, https://walledculture.org/a-blatant-no-from-a-copyright-holder-stops-vital-linguistic-research-work-in-africa/.

75 “A ‘Blatant No’ from a Copyright Holder Stops Vital Linguistic Research Work in Africa.”

76 Leo Gao et al., “The Pile: An 800GB Dataset of Diverse Text for Language Modeling” (arXiv, December 31, 2020), https://doi.org/10.48550/arXiv.2101.00027.

77 Angella Ndaka and Geci Karuri-Sebina, “Whose Agenda Is Being Served by the Dynamics around AI African Data Extraction?,” Daily Maverick, April 22, 2024, https://www.dailymaverick.co.za/article/2024-04-22-whose-agenda-is-being-served-by-the-dynamics-surrounding-ai-african-data-extraction/.

78 Rodriguez, “An MIT Technology Review Series.”

79 Robert Phillipson, “Linguistic Imperialism,” December 14, 2018, https://doi.org/10.1002/9781405198431.wbeal0718.pub2.

80 Rohit Inani, “Language Is a ‘War Zone’: A Conversation With Ngũgĩ Wa Thiong’o,” The Nation, March 9, 2018, https://www.thenation.com/article/archive/language-is-a-war-zone-a-conversation-with-ngugi-wa-thiongo/.

81 Rodriguez, “An MIT Technology Review Series.”

82 Keoni Mahelona et al., “OpenAI’s Whisper Is Another Case Study in Colonisation,” Papa Reo, January 24, 2023, https://blog.papareo.nz/whisper-is-another-case-study-in-colonisation/.

83 See Tahu Kukutai and Donna Cormack, in a forthcoming piece by the Carnegie Endowment for International Peace, 2024.

84 See Angeline Wairegi, in a forthcoming piece from the Carnegie Endowment for International Peace, 2024.

85 See Chijioke Okorie and Vukosi Marivate, “How African NLP Experts Are Navigating the Challenges of Copyright, Innovation, and Access,” Carnegie Endowment for International Peace, April 2024.

86 The White House, “Executive Order on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence,” The White House, October 30, 2023, https://www.whitehouse.gov/briefing-room/presidential-actions/2023/10/30/executive-order-on-the-safe-secure-and-trustworthy-development-and-use-of-artificial-intelligence/.

87 “Statement from Vice President Harris on the UN General Assembly Resolution on Artificial Intelligence,” The White House, March 21, 2024, https://www.whitehouse.gov/briefing-room/statements-releases/2024/03/21/statement-from-vice-president-harris-on-the-un-general-assembly-resolution-on-artificial-intelligence/.

88 “FTC’s Lina Khan Warns Big Tech over AI,” Stanford Institute for Economic Policy Research (SIEPR), November 3, 2023, https://siepr.stanford.edu/news/ftcs-lina-khan-warns-big-tech-over-ai.

89 “Guiding Principles on Business and Human Rights,” Office of the UN High Commissioner for Human Rights, accessed April 29, 2024, https://www.ohchr.org/sites/default/files/documents/publications/guidingprinciplesbusinesshr_en.pdf.

90 See Jun-E Tan and Rachel Gong, “The Plight of Platform Workers Under Algorithmic Management in Southeast Asia,” Carnegie Endowment for International Peace, April 2024.

91 Amy Mahn, “NIST’s International Cybersecurity and Privacy Engagement Update – International Dialogues, Workshops, and Translations,” NIST, February 8, 2024, https://www.nist.gov/blogs/cybersecurity-insights/nists-international-cybersecurity-and-privacy-engagement-update.

92 “Raising Standards: Ethical Artificial Intelligence and Data for Inclusive Development in Southeast Asia,” Asia Society, accessed April 29, 2024, https://asiasociety.org/policy-institute/raising-standards-data-ai-southeast-asia.

93 “Raising Standards.”

94 “OECD Guidelines for Multinational Enterprises on Responsible Business Conduct,” OECD, accessed April 29, 2024, https://www.oecd.org/publications/oecd-guidelines-for-multinational-enterprises-on-responsible-business-conduct-81f92357-en.htm; “FTC Signs on to Multilateral Arrangement to Bolster Cooperation on Privacy and Data Security Enforcement,” Federal Trade Commission, January 17, 2024, https://www.ftc.gov/news-events/news/press-releases/2024/01/ftc-signs-multilateral-arrangement-bolster-cooperation-privacy-data-security-enforcement.

95 “South-South and Triangular Cooperation,” United Nations Office for South-South Cooperation (UNOSSC), accessed April 29, 2024, https://unsouthsouth.org/about/about-sstc/.

96 Abdul Muheet Chowdhary and Sebastien Babou Diasso, “Taxing Big Tech: Policy Options for Developing Countries,” State of Big Tech, August 25, 2022, https://projects.itforchange.net/state-of-big-tech/taxing-big-tech-policy-options-for-developing-countries/.

97 Daniel Bunn, “Testimony: The OECD’s Pillar One Project and the Future of Digital Services Taxes,” Tax Foundation, March 7, 2024, https://taxfoundation.org/research/all/federal/pillar-one-digital-services-taxes/.

98 Benjamin Guggenheim, “U.N. Votes to Forge Ahead on Global Tax Effort in Challenge to OECD,” POLITICO Pro, November 22, 2023, https://subscriber.politicopro.com/article/2023/11/u-n-votes-to-forge-ahead-on-global-tax-effort-in-challenge-to-oecd-00128495; José Antonio Ocampo, “Finishing the Job of Global Tax Cooperation,” Project Syndicate, April 29, 2024, https://www.project-syndicate.org/commentary/global-convention-tax-cooperation-what-it-should-include-by-jose-antonio-ocampo-2024-04.

99 Leigh Thomas, “UN Vote Challenges OECD Global Tax Leadership,” Reuters, accessed April 29, 2024, https://www.reuters.com/world/un-vote-challenges-oecd-global-tax-leadership-2023-11-23/.

100 “Internet Impact Brief: 2021 Indian Intermediary Guidelines and the Internet Experience in India,” Internet Society (blog), accessed April 29, 2024, https://www.internetsociety.org/resources/2021/internet-impact-brief-2021-indian-intermediary-guidelines-and-the-internet-experience-in-india/.

101 “BNamericas - How Latin America Contributes to the Busines...,” BNamericas.com, accessed April 29, 2024, https://www.bnamericas.com/en/analysis/how-latin-america-contributes-to-the-business-of-the-3-big-tech-players; Amit Anand, “Investors Are Doubling Down on Southeast Asia’s Digital Economy,” TechCrunch (blog), September 9, 2021, https://techcrunch.com/2021/09/09/investors-are-doubling-down-on-southeast-asias-digital-economy/; “Big Tech Benefits but Does Not Contribute to Digital Development in the Caribbean,” StMaartenNews.com (blog), February 1, 2024, https://stmaartennews.com/telecommunications/big-tech-benefits-but-does-not-contribute-to-digital-development-in-the-caribbean/; Tevin Tafese, “Digital Africa: How Big Tech and African Startups Are Reshaping the Continent,” German Institute for Global and Area Studies (GIGA), accessed April 29, 2024, https://www.giga-hamburg.de/en/publications/giga-focus/digital-africa-how-big-tech-and-african-startups-are-reshaping-the-continent.

102 “Global Partnership on Artificial Intelligence - GPAI,” accessed April 29, 2024, https://gpai.ai/.

103 “GDC-submission_Southern_Alliance.Pdf,” accessed April 29, 2024, https://www.un.org/techenvoy/sites/www.un.org.techenvoy/files/GDC-submission_Southern_Alliance.pdf.

104 “AI Reporting Grants,” Pulitzer Center, accessed April 29, 2024, https://pulitzercenter.org/grants-fellowships/opportunities-journalists/ai-reporting-grants.

105 Ken Opalo, “Academic Research and Policy Research Are Two Different Things,” January 8, 2024, https://www.africanistperspective.com/p/there-is-a-lot-more-to-policy-research.

106 Opalo.

107 “Microsoft Africa Research Institute (MARI),” Microsoft Research (blog), accessed April 29, 2024, https://www.microsoft.com/en-us/research/group/microsoft-africa-research-institute-mari/; “Microsoft Research Lab - India,” Microsoft Research (blog), April 5, 2024, https://www.microsoft.com/en-us/research/lab/microsoft-research-india/.

108 Jan Krewer, “Creating Community-Driven Datasets: Insights from Mozilla Common Voice Activities in East Africa,” Mozilla Foundation, accessed April 29, 2024, https://foundation.mozilla.org/en/research/library/creating-community-driven-datasets-insights-from-mozilla-common-voice-activities-in-east-africa/.

109 See Tahu Kukutai and Donna Cormack, in a forthcoming piece from the Carnegie Endowment for International Peace, 2024.

110 “South-South and Triangular Cooperation.”

111 See Carolina Botero, “Latin American AI Strategies Can Tackle Copyright as a Legal Risk for Researchers,” Carnegie Endowment for International Peace, April 2024.

Former Nonresident Scholar, Technology and International Affairs Program

Aubra Anthony was a nonresident scholar in the Technology and International Affairs Program at Carnegie, where she researched the human impacts of digital technology, specifically in emerging markets.

Senior Research Analyst, Technology and International Affairs

Lakshmee Sharma is a senior research analyst in the Technology and International Affairs Program at Carnegie, where she focuses on the social, political, and economic impacts of digital technology. With an international development perspective, she explores technology adoption for equitable inclusion.

Nonresident Scholar, Asia Program

Elina Noor is a nonresident scholar in the Asia Program at Carnegie, where she focuses on developments in Southeast Asia, particularly the impact and implications of technology in reshaping power dynamics, governance, and nation-building in the region.

Carnegie does not take institutional positions on public policy issues; the views represented herein are those of the author(s) and do not necessarily reflect the views of Carnegie, its staff, or its trustees.
