Summary

Artificial intelligence (AI) is expected to play a major role in shaping global competitiveness and productivity over the next couple of decades, granting early adopters significant societal, economic, and strategic advantages. As the pace of AI innovation and development picks up, underpinned by advances in big data and high-performance computing, the United States and China are both in the driver’s seat. Europe, meanwhile, is punching far below its weight despite certain advantages such as a strong industrial base and leading AI research and talent. This is largely due to the fragmentation of the EU’s digital market, difficulties in attracting human capital and external investment, and a lack of commercial competitiveness.

Fortunately, in recent years, European leaders have recognized the importance of not lagging behind on AI and have sought to raise their ambitions. Leaders such as German Chancellor Angela Merkel and French President Emmanuel Macron have stressed the need for Europe to become a leading global player on AI, and the new European Commission has made AI a top priority for the next five years. Having declared AI a major strategic priority, several member states and EU institutions are taking steps to advance the continent’s ambitions for AI leadership. These steps include rolling out dedicated national and EU-level AI strategy documents, boosting research and innovation, and exploring new regulatory approaches for managing the development and use of AI.

Central to the EU’s efforts is the notion of AI that is “made in Europe”: AI that pays attention to ethical and human-centric considerations and is in line with core human rights values and democratic principles. Given the need to address the societal, ethical, and regulatory challenges posed by AI, the EU’s stated added value lies in turning its robust regulatory and market power (the so-called “Brussels effect”) into a competitive edge under the banner of “trustworthy AI.” Because it is designed to alleviate potential harm and to permit accountability and oversight, this vision for AI-enabled technologies could set Europe apart from its global competitors. It could also help increase the EU’s digital sovereignty by ensuring that European users have more choice and control.

Yet normative principles and regulation alone are not enough for the EU to become a global AI leader. Europe also needs to reevaluate its competitiveness in this field in a way that leverages its comparative advantages and preserves its interests in a world where technology is increasingly emerging as a key driver of great-power rivalry. Amid concerns that Europe is losing ground to the United States and China, EU member states should acknowledge that none of them can muster, on its own, the resources required to keep up with the latest AI developments. There is a clear rationale for a stronger EU-level role and for a more coherent Europe-wide approach to AI that complements member states’ own actions.

This paper assesses whether existing EU and national strategies and funding initiatives are sufficient for Europe to seize the opportunities afforded by AI. It argues that for its AI ecosystem to thrive, Europe needs to protect its research base, encourage governments to be early adopters, foster its startup ecosystem, expand international links, and both develop AI technologies and use them efficiently. Above all, to take these steps, Europe needs to catch up on digitizing its economies and complete the digital single market once and for all.

Recommendations:

There are a host of steps that the EU and its member states can pursue to bolster Europe’s approach to AI. Specifically, they can:

- complete the EU digital single market;

- balance technological sovereignty with global supply chains;

- lead on standard setting and regulations;

- secure citizens’ trust in AI applications;

- promote a vibrant Europe-wide AI ecosystem;

- align the national AI strategies of EU member states;

- safeguard dual-use technologies;

- ensure close EU-UK cooperation on AI;

- enhance transatlantic dialogue on AI;

- engage global stakeholders on ethical AI; and

- consider AI a facet of European strategic autonomy.

Introduction

Artificial intelligence (AI) is expected to transform economies and affect virtually every aspect of human life over the next couple of decades.1 This disruptive potential has triggered rapidly growing investments in AI research and development (R&D) as well as rapid uptake in the public and private sectors worldwide. By 2030, AI could contribute as much as $13 trillion to the global economy, a figure that approaches the current annual economic output of China, the world’s second-largest economy.2 Moreover, as AI applications expand to a wide range of sectors, early adopters will be well positioned to reap significant economic and strategic benefits. The hyped notion of an AI arms race is too simplistic to capture the complex dynamics of the global digital ecosystem, and its continued use risks further exacerbating global competition in this key strategic domain. Still, the combination of potentially large economic, societal, and military dividends has propelled countries to join this so-called race and to apply AI swiftly and effectively in as many sectors as possible.

The United States and China are ahead in the AI competition, although countries such as Israel, Russia, Singapore, and South Korea are investing heavily in AI as a strategic priority.3 Some European countries, such as France, Germany, Sweden, and the United Kingdom (UK), are also at the forefront of the field, but none of them can necessarily compete globally on its own. Europe as a whole is punching far below its weight. This is largely due to the fragmentation of the EU’s digital market, difficulties with attracting human capital and investment from outside Europe, and a lack of commercial competitiveness. Its policymakers frequently acknowledge the risks of lagging behind, but Europe also has advantages, such as its strong industrial base and leading AI research and talent, that it can leverage to compete better globally.

European governments and EU institutions are taking steps to raise the continent’s ambitions for AI leadership. Countries are rolling out dedicated national AI strategies and digital approaches. The European Commission has emerged as a key driver and agenda setter for a more coherent approach to AI in Europe.4 Its plan, set out in the white paper on AI released in February 2020, is to further boost the EU’s research and innovation as well as its technological and industrial capabilities. The EU argues that its added value lies in turning its strong regulatory and market power into a competitive edge under the unique selling proposition of “trustworthy AI” that is “made in Europe.”5 This approach is characterized by transparency, diversity, and fairness, and it is designed to alleviate potential harm and to permit accountability and oversight, thus safeguarding social and environmental well-being. Also central to the EU’s efforts in the digital space is a strong desire to be more self-sufficient. Commissioner for Internal Market and Services Thierry Breton, in laying out his vision for the EU, said: “we have to work on our technological sovereignty.”6

One area where the EU has the potential to be instrumental is shaping the global normative agenda on a “human-centric” approach to AI.7 The aim is to set up a framework for the ethics-driven and trustworthy development of AI technology and applications in line with European values and to prepare the groundwork for a global alliance in this domain. Although it has been criticized for focusing too much on legal and ethical guidelines, the EU sees itself as having a first-mover advantage as a regulatory powerhouse on ethical AI, setting the stage for global standards of design and usability and ensuring legal clarity in AI-based applications. As the General Data Protection Regulation (GDPR) shows, the EU’s strategic edge resides primarily in its market, normative, and regulatory powers (what has been described as the “Brussels effect”), though the digital single market is admittedly still a work in progress.8 However, while the European Commission’s digital sovereignty agenda may help advance certain AI developments in Europe, it is equally essential that the EU work closely with like-minded global partners on setting joint AI standards and regulations.

Yet regulations alone are not sufficient. The window of opportunity for consolidating a distinctive European approach to AI on the international stage is closing fast. What is needed is a reevaluation of European competitiveness in this field. Europe must also take stock, critically and with foresight, of the options at its disposal to shape AI in a way that leverages its comparative advantages and preserves its interests, especially in a world where technology is increasingly emerging as a key aspect of great-power competition, notably between the United States and China. Fortunately, European leaders seem to recognize that more action is needed and have recently taken steps to upgrade Europe’s digital ecosystem, though it is still too early to tell what these actions will amount to. Some caution is warranted: France, Germany, and other EU member states have subsidized and tried to create or favor national champions in the tech and telecommunications sectors for decades, usually with little impact.

This paper examines the national strategies of major European countries and significant EU initiatives in AI, with special attention to Europe’s leadership potential and capacity to combine its technological and industrial strengths with its agenda-setting and regulatory power. Though some municipal authorities in Europe are discussing proposals to regulate or limit the use of facial recognition and other AI-related technologies,9 the focus here is on the national and EU levels rather than the subnational one. The paper starts by assessing Europe’s role in the global AI competition. It compares the national AI strategies of various European actors (including Czechia, Estonia, Finland, France, Germany, Sweden, and the United Kingdom) and discusses government efforts to shore up Europe’s leadership position on AI. It then outlines and evaluates the EU’s distinctive approach and recent AI initiatives before exploring the strategic considerations facing European policymakers in a more competitive global environment. Finally, the paper puts forward recommendations toward a more coherent European AI strategy.

Europe’s Place in the Global AI Competition

The global competition in AI is fierce, between companies as well as states. Countries’ competitiveness can be measured in terms of market share, investment, and innovation prowess, as well as the strength of regulatory and ethical frameworks.

Market Share

When it comes to the global AI startup ecosystem, a 2018 study found that the top three players (measured by number of AI startups) were the United States with 1,393 startups (40 percent), China with 383 startups (11 percent), and Israel with 362 startups (11 percent).10 Four European countries were among the top ten (the UK in fourth place, France in seventh, Germany in eighth, and Sweden in tenth). Collectively, though, Europe was second only to the United States, with 769 AI startups (22 percent of the global total). This suggests that while individual European countries may not be globally competitive, Europe as a whole has the potential to be a major player in AI if it can strengthen its digital single market, though Brexit will have long-term consequences for such efforts.

Europe also lags in AI-related patent applications, though its filings for technologies related to the Internet of Things and the Fourth Industrial Revolution grew by 54 percent in 2016, driven mainly by increases in the UK, Germany, and France.11 The United States holds the most AI patent applications, receives the majority (66 percent) of AI-related private investment globally, and is home to the world’s highest-valued digital players (Google, Apple, Facebook, IBM, Microsoft, and Amazon).12 It therefore has a superior foundation for developing and implementing AI applications. But between 2013 and 2017, the number of patents in deep learning and AI published in China grew at a much faster rate than those in the United States.13 In 2017, China obtained 641 patents related to AI, compared with 130 in the United States.14

Moreover, Europe’s information technology assets are scattered across different countries. This is precisely why the establishment of the digital single market is considered so crucial for the EU’s global competitiveness. Once completed, it would make the EU one of the largest and most valuable digital markets in the world. But the union needs to remove the remaining barriers to cross-border data flows and 5G networks, two areas where it currently lags behind.15 The European Commission’s most recent factsheet, from February 2019, stated that the European Parliament, the Council of the EU, and the European Commission had agreed on twenty-eight of the thirty legislative initiatives initially presented as part of the digital single market strategy launched in 2015.16 Other legislative initiatives are still pending agreement between the European Parliament and the Council of the EU. The most important ones include the regulation of the cross-border portability of online content (geoblocking),17 the regulation of the free flow of nonpersonal data in the EU,18 and a regulation establishing a European High Performance Computing Joint Undertaking, which will pool EU and national funding to develop a pan-European infrastructure for supercomputing and to support related research and innovation activities.19

The European Commission has also taken nonlegislative steps to advance the digital single market strategy, including the Digital Education Action Plan20 (which includes eleven actions to support the development of technology use and digital competence in education), the High Level Expert Group on AI (see below), and the Fintech Action Plan21 (which includes initiatives to establish a more competitive and innovative financial market). The recent white paper on AI also proposes creating a “lighthouse center of research, innovation and expertise,”22 encouraging the European AI research community to flourish, and building world-class testing and experimentation sites across Europe. The continent is already home to one of the world’s largest nonprofit contract research institutes for software technology based on AI methods: the German Research Center for Artificial Intelligence.23 Founded in 1988, it has become Germany’s leading research center on innovative commercial AI software technology. On the same day the white paper was released, the European Commission also proposed a data strategy to promote a single data market and a European alternative for cloud-based services. While efforts to strengthen the digital single market are progressing, Brexit means a key European country is taking a different direction. Since London is Europe’s most important AI hub, with 1,000 AI companies, thirty-five tech hubs, and renowned research centers such as the Alan Turing Institute,24 the loss of the UK could slow Europe’s collective progress on AI.

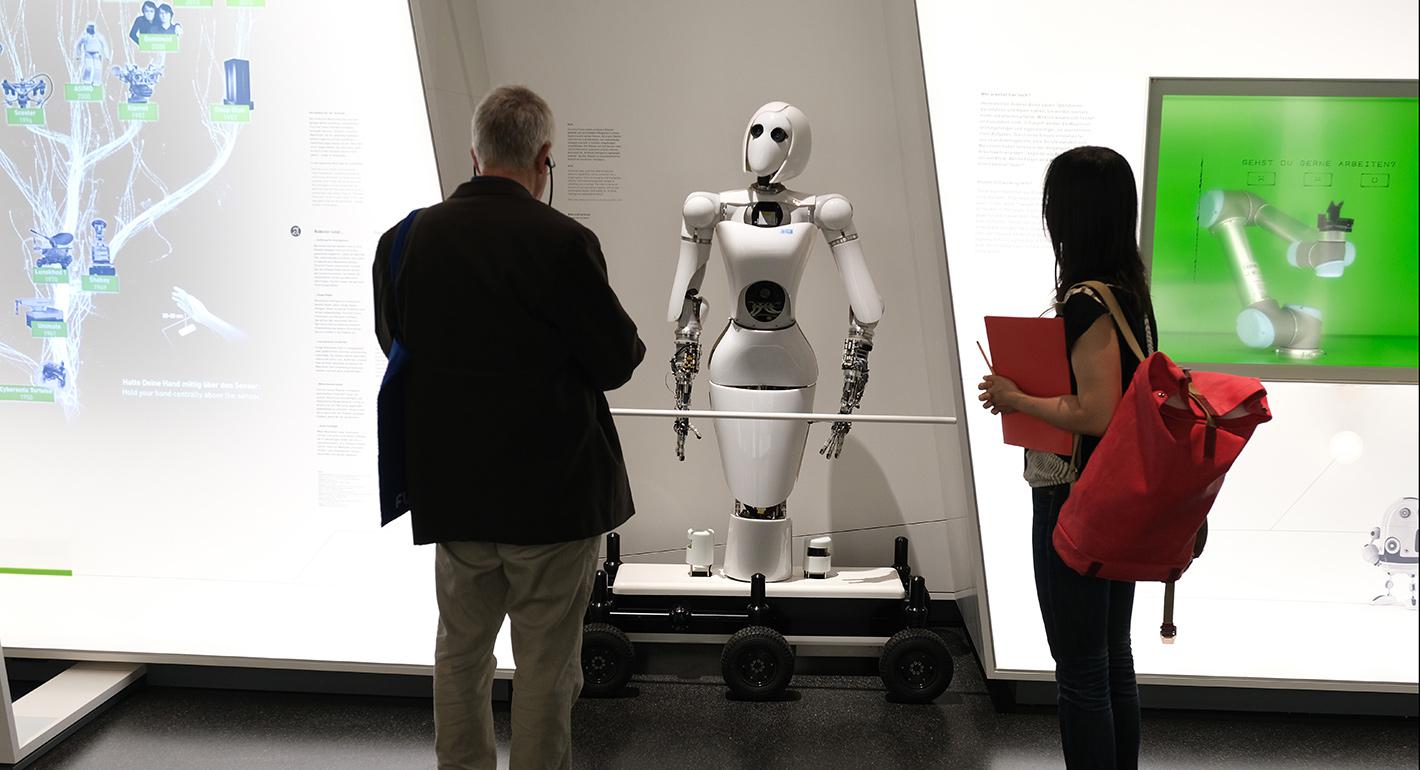

Translating basic research into applied research and innovation in the civil and defense sectors is another issue Europe needs to address.25 Continued advancement in AI requires collaboration between industry, academia, and government as well as the industrywide development of solutions. Europe has traditionally been quite good at both, and combined with its strength in basic research, this could provide a strategic advantage. European companies are also ahead of Chinese and American ones in the adoption of robotic process automation.26 Moreover, there are plenty of AI companies in Europe, so establishing compliance regimes for them as part of the European Commission’s ethical guidelines could help spread EU norms on AI development and use.

Finally, European AI researchers enjoy excellent scientific standing, though China publishes as many AI journal and conference papers per year as Europe does.27 The strength of cross-border academic research teams in Europe creates some opportunities for cross-pollination with industry and government actors. Distributed but cooperative research clusters across Europe could further encourage such exchanges, and smaller initiatives are already laying the groundwork (for example, the Nordic AI Artificial Intelligence Institute,28 the Benelux Association for Artificial Intelligence,29 and the Robot Technology Transfer Network, or ROBOTT-NET).30 There have also been attempts to forge pan-European research networks, such as the Confederation of Laboratories for Artificial Intelligence Research in Europe (CLAIRE),31 supported by the European Association for Artificial Intelligence, and the AI4EU consortium, supported by the European Commission’s Horizon 2020 program.32 As the recent white paper on AI notes, further cooperation between member states to capitalize on each other’s strengths, together with cross-border and sectoral networks of excellence, could help Europe better exploit its existing potential on AI.

External Investment and Innovation Prowess

When it comes to investment, the United States and China are also ahead of Europe. The United States is by far the leader in AI-related investment and venture capital. Whereas its academic institutions conduct the majority of basic research, the private sector is very active in applying research done across the country and elsewhere. These academic and private-sector players are also exceptionally good at attracting top global talent.33 Meanwhile, Chinese AI startups benefit from close ties with the government, which give them access to huge amounts of public-sector funding and early-adopter institutions. For example, in the domain of facial recognition, the company CloudWalk received a $301 million grant from the Guangzhou Municipal Government in 2017,34 while Megvii raised $460 million in a funding round led by the central government’s venture capital fund.35

Despite a steady increase since 2008, Europe still lags behind the United States and China in private investment in AI. In 2016, Europe devoted only between 2.4 and 3.2 billion euros in investment funds, whereas Asia invested between 6.5 and 9.7 billion euros and North America between 12.1 and 18.6 billion euros.36 Private equity and venture capital firms have accounted for 75 percent of AI-related deal volume in Europe in the last ten years.37 Though reported amounts are difficult to compare because analysts use different definitions of AI, the UK, France, and Germany attracted the lion’s share of investment in AI companies over the past decade, evidence of a highly uneven European landscape.38 Moreover, while most AI-related investments in Europe come from European sources, many of the continent’s most successful digital companies, including Skype (Estonia/Sweden),39 Shazam (UK),40 and Momondo (Denmark),41 have been acquired by American or Chinese tech giants, making Europe a net importer of digital services despite its significant levels of innovation and many digital startups. The foreign-investment screening framework that the EU agreed to in April 2019, which seeks to protect critical technologies, could restrict Chinese investments in European AI startups.42

Innovation is paramount for the EU’s ability to remain competitive. Launched by the European Commission in October 2010, the Innovation Union, one of the seven flagship initiatives of the Europe 2020 strategy, aims to improve conditions and access to finance for research and innovation.43 On R&D, Europe spends 0.8 percentage points of gross domestic product (GDP) less every year than the United States and 1.5 percentage points less than Japan.44 Even so, its innovation performance continues to improve.45 According to the 2019 edition of the European Innovation Scoreboard, the performance of twenty-four EU countries improved compared to the previous year, and the progress of lower-performing countries has accelerated relative to that of higher-performing countries. Since 2011, the EU’s average innovation performance has increased by nearly nine percentage points, and in 2019 it surpassed that of the United States for the first time. Within the EU, innovation performance has increased in twenty-five countries since 2011, with Sweden the EU’s innovation leader in 2019, followed by Finland, Denmark, and the Netherlands.46 Lithuania, Greece, Latvia, Malta, the UK, Estonia, and the Netherlands are the fastest-growing innovators.47

But China is catching up fast, with a growth rate in innovation performance three times that of the EU, while Canada, Australia, and Japan all perform better than the EU.48 In China, industrial investments in R&D grew by 20 percent between 2017 and 2018, compared to 8 percent in the EU and 9 percent in the United States.49 Meanwhile, the EU is home to only 33 unicorns (startups valued at over $1 billion), compared to 151 in the United States and 83 in China.50 Europe also has fewer young leading innovators. Europe’s private research, development, and innovation investments are lagging behind, representing 1.3 percent of EU GDP compared to 1.6 percent in China, 2 percent in the United States, 2.6 percent in Japan, and 3.3 percent in South Korea.51 R&D intensity (gross expenditure on R&D as a percentage of GDP) can also offer insight into the innovation objectives governments are pursuing. In 2018, the United States, Japan, and South Korea had the largest increases in R&D intensity. In the EU, gross R&D expenditures exceeded 2 percent of GDP for the first time, mainly due to positive trends in Germany, the UK, and Poland.52

Overall, in the context of an accelerating global drive for innovation, the EU must reinforce and implement its research, development, and innovation policy; maintain excellence in key enabling and dual-use technologies; and develop a European approach for critical technology infrastructure across the continent to sustain efforts to scale up and diffuse the use of technology.

Regulatory and Ethical Frameworks

When it comes to regulatory and ethical approaches, the EU fares better than the United States and China. The United States lacked an overall government strategy on AI until recently, but its emphasis on the benefits of private-sector innovation, with the government staying out of the way, is nothing new. Former president Barack Obama’s administration released two reports on AI: “The Future of Artificial Intelligence” and “AI, Automation and the Economy.”53 It also released three landmark studies on big data in 2014, 2015, and 2016.54 More recently, the administration of President Donald Trump has begun to shape its own approach with the launch of the American AI Initiative.55 The relevant executive order instructs the U.S. government to develop a plan to extend the United States’ lead in AI and aims to boost investment in AI research, standard setting, workforce training, and outreach to allies. However, while making clear that AI is a major priority, these policies have left some questions of implementation and resource allocation unaddressed. Congress has recently discussed new proposals to fund a national AI strategy.56

Moreover, the executive order and subsequent official documents focus heavily on protecting the United States’ AI advantage, and if this motive is accompanied by restrictions on cross-border flows of data, capital, talent, and know-how, it may hurt the country in the long run. Likewise, the Trump administration’s recent visa restrictions on foreign tech workers could undermine an important source of U.S. competitiveness. In February 2020, the White House reportedly aimed to double the AI research budget: its 2021 budget proposal would raise nondefense AI R&D funding to nearly $2 billion and quantum computing funding to about $860 million over the next two years.57 In January 2020, the administration unveiled ten AI principles for federal government agencies to consider when drafting rules and laws for the use of AI in the private sector.58

These principles are informed by three main goals: to ensure public engagement, limit regulatory overreach, and promote trustworthy AI that is fair, transparent, and safe. As Lynne Parker, deputy chief technology officer at the White House Office of Science and Technology Policy, explained, the principles are broadly defined to allow each government agency to create more specific regulations tailored to its sector.59 The White House is attempting to address concerns over the ethics and oversight of AI without hampering innovation. It also launched a series of public consultations before devising a plan on how to implement the principles.60 Although the principles serve to guide federal agencies on how to devise rules on the use of AI, the administration makes clear that its key concern is limiting regulatory overreach and avoiding overregulation, a concern it has voiced to its European allies regarding the European Commission’s AI white paper.61

China’s ambition is to become the world leader in AI as part of the government’s Made in China 2025 initiative. Beijing believes that AI technology is key to future global military and economic competition.62 In July 2017, the State Council published its “Next Generation Artificial Intelligence Development Plan,”63 which sets clear targets: to reach the same level in AI as the United States by 2020, to become the world’s premier AI innovation center by 2030, and to build a domestic AI industry worth 150 billion renminbi ($22.2 billion) by 2020 and 400 billion renminbi ($59.1 billion) by 2025.64

The China Electronics Standardization Institute, under the Ministry of Industry and Information Technology, is one of the key players in the country; in January 2018, it published the “Artificial Intelligence Standardization White Paper,” which outlines the country’s national AI standardization framework and plan for AI capability development.65 Since the release of the white paper, the institute has been actively engaged in developing relevant international standards, including as an active member of the International Organization for Standardization subcommittee that develops international standards for the AI industry.66 Chinese AI products are becoming harder to export as Western scrutiny of data privacy standards and security risks grows (as seen in the recent concerns about Huawei and 5G). Overall, however, China’s AI strategy has been called the most comprehensive and most ambitious in the world.67

The country is also seeking to promote international partnerships on AI as part of its efforts to set norms in this field and export its government-surveillance practices to other countries. Through its Digital Silk Road, Beijing is investing heavily in digital infrastructure in other countries to spread its approach to AI, which it hopes will move these countries closer to its own governance model and make them more dependent on China. A related and worrisome development is China’s testing and use of AI for censorship, repression, and extensive surveillance through initiatives like its pilot social credit systems.68 On top of this, Beijing is paying significant attention to the role of AI in national security in the belief that integrating AI into military technologies can allow China to overtake the United States in military supremacy.69

The EU’s white paper on AI and any future legislation are likely to influence the global regulatory debate. Here, the EU’s experience with data privacy regulations such as the GDPR can serve as an example of the Brussels effect establishing global norms for emerging technologies. The GDPR seeks to incentivize companies to find innovative solutions for processing data within its legal remit. The principle of accountability enshrined in the GDPR aims to foster data accuracy, which in turn should increase trust in the source of such data and in the reliability of results based on it. Ultimately, the EU’s focus on protecting user privacy could be an asset if it enables European innovators to build AI applications that are more consumer friendly. Since regulating AI presupposes other rules, such as those on data protection and content moderation, the EU is rather well positioned on this front.

Europeans may more readily accept AI technologies that respect fundamental rights and consumer rights, and such technologies could spread rapidly as global standards materialize, granting Europe a first-mover advantage. This is especially the case for what the white paper identifies as high-risk AI applications (such as uses related to healthcare, transport, energy, and the public sector). Efforts to build trust (via the GDPR or future AI legislation) are important, but since the implications of advanced AI are not yet clear, neither are the policy measures that will be needed to properly address its risks. Depending on how it is interpreted and enforced,70 the GDPR could become a unique comparative advantage for the EU in making good on its ambition to become a leader in “trustworthy AI.”71

AI could also have significant negative impacts on societies globally. Concerns about unemployment driven or exacerbated by technology are quite real in many countries, and rising technology-induced job displacement could contribute to increased support for populist parties and movements, which could destabilize democracies around the world. The EU’s early focus on responsible and safe AI could give it an edge when it comes to setting ethical and regulatory standards. The EU’s approach would regulate neither the technology as such nor its applications; rather, it would help guide how applications are developed and deployed. By reporting, monitoring, and analyzing the progress of AI, the EU could position itself to define quality, build alliances with like-minded partners, and lead multilateral initiatives.

In sum, Europe is behind the United States and China on many of the key dimensions of AI competitiveness but ahead on regulatory approaches. A viable European strategy for competing would therefore include redoubled efforts to catch up when it comes to AI research and innovation, investment, data, and adoption, while simultaneously seeking to leverage Europe’s first-mover advantage on establishing regulatory frameworks pertaining to AI development and use.

National European Efforts on AI

European policymakers at the EU and national levels appear to recognize the importance of AI. For example, in November 2018, Chancellor Angela Merkel approved Germany’s 3-billion-euro plan for AI, stating that “[t]oday, Germany cannot claim to be among the world leaders in artificial intelligence. Our aspiration is to make ‘Made in Germany’ a trademark also in artificial intelligence, and to ensure that Germany takes its place as one of the leading [AI] countries in the world.”72 Similarly, French President Emmanuel Macron has emphasized the importance of AI for France and the EU. In March 2018, he said: “I think artificial intelligence will disrupt all the different business models and it’s the next disruption to come. So I want to be part of it. Otherwise I will just be subjected to this disruption without creating jobs in this country.”73 Other European leaders have also increasingly expressed interest in AI and the need for European competitiveness in this field. These comments demonstrate a rising sense of urgency across European capitals about the importance of AI and the need for Europe to play a leading role in the digital age.

They also reflect a growing realization that greater digital sovereignty is a prerequisite for great-power status, and that such status is becoming even more strategically important because new and emerging technologies such as AI could concentrate economic power in extraordinary ways. Compared to previous industrial revolutions that harnessed the power of steam, electricity, and computers, the Fourth Industrial Revolution is unique primarily because of the unprecedented scale, fast convergence, and yet-to-be-discovered impact of emerging technological breakthroughs (including AI and especially its subfield of machine learning). From this perspective, economic and political power will evolve in major ways, potentially shifting toward a few powerful digital monopolies and tech giants while also enabling new and disruptive companies to rise quickly.

To respond to this wave of transformations, several European countries—notably Czechia, Estonia, Finland, France, Germany, Sweden, and the UK—have developed AI strategies, and others plan to do so in the near future (see table 1). To varying degrees, these strategies outline specific actions, commit significant amounts of money to AI development, and seek to uphold European values and advance AI in an ethical manner that clearly benefits society.74 The following analysis of national approaches to AI covers four dimensions: government investment, private-sector innovation, the public-private AI ecosystem, and regulations and ethics.

Table 1. National Approaches to AI in Europe

| Date | Country | AI Deliverable/Action Plan |
| --- | --- | --- |
| December 18, 2017 | Finland | Publication of a national AI strategy |
| March 6, 2018 | UK | Launch of “Sector Deal for AI” report |
| March 2018 | Italy | Publication of “AI at the Service of Citizens” white paper |
| March 29, 2018 | France | Publication of a national AI strategy (the Villani report) |
| May 16, 2018 | Sweden | Publication of a national AI strategy |
| November 16, 2018 | Germany | Publication of a national AI strategy |
| March 2019 | Spain | Release of a national research, development, and innovation strategy in AI |
| March 14, 2019 | Denmark | Publication of a national AI strategy |
| March 14, 2019 | Lithuania | Publication of “Lithuanian Artificial Intelligence Strategy: A Vision of the Future” |
| March 18, 2019 | Belgium | Launch of the “AI4Belgium Initiative strategy” |
| May 6, 2019 | Czechia | Publication of a national AI strategy |
| May 24, 2019 | Luxembourg | Publication of a national AI strategy |
| June 11, 2019 | Portugal | Publication of the national AI strategy “AI Portugal 2030” |
| June 26, 2019 | Austria | Publication of “Artificial Intelligence Mission Austria 2030” |
| July 25, 2019 | Estonia | Publication of a short-term national AI strategy for 2019–2021 |
| August 21, 2019 | Poland | Launch of the Artificial Intelligence Development Policy for 2019–2027 |
| October 3, 2019 | Malta | Release of “A Strategy and Vision for Artificial Intelligence in Malta 2030” |
| October 9, 2019 | Netherlands | Publication of a “Strategic Action Plan for AI” |
| January 14, 2020 | Norway | Publication of a national strategy on AI |

France

France’s approach to AI was first set out in a 2018 government-commissioned report (the Villani report) titled “For a Meaningful Artificial Intelligence: Toward a French and European Strategy.”75 Noteworthy here is the word “European” in the title. This strategic document crystallizes a comprehensive and forward-looking approach to AI that emphasizes more public research, resources, training, transfers, and innovation in four strategic sectors (healthcare, the environment, transportation and mobility, and defense and security).76 It seeks to make research on and development of AI technologies interdisciplinary and inclusive by involving social scientists, eliminating potential biases in algorithms, exploring the complementarity of humans and AI in human-machine interactions, and promoting gender equality in scientific and technical sectors.77

The strategy also recognizes the necessity of treating the European data ecosystem as a common good, in which public authorities should introduce “new ways of producing, sharing and governing data.”78 It stresses the need to prevent the brain drain of France’s leading experts in the field, make AI understandable to society at large, strengthen R&D in AI technologies in meaningful and ethical ways, and increase gender balance and diversity in the field. Macron has announced 1.5 billion euros of public funding for AI by 2022. He has also emphasized leading “the European way” in this field, though it is unclear what precisely he means by this and whether it entails pursuing a middle ground between the approaches of the United States and China.79

France’s AI strategy focuses on four major challenges: reinforcing the ecosystem to attract the best talent, developing an open data policy particularly in sectors where the country is already competitive, creating a regulatory and financial framework that favors emerging AI businesses, and developing AI regulations with respect to ethics and acceptable standards for citizens.80 On the one hand, the centralized nature of France’s political system can enable government agencies to set the parameters for AI applications in certain areas. On the other hand, centralized control of innovation can impede progress in the long run, as AI requires a broad spectrum of R&D and applications across different fields. Additionally, though France is well known for its strong skills base in science, technology, engineering, and mathematics (STEM), it has fewer academic institutions and researchers directly involved in AI research than the UK and Germany.

To meet the need for increased cooperation between industry actors and universities, France’s planned centers for AI excellence may help to bring together researchers, developers, and users to ensure that scientific progress translates into industrial applications. This could also make the French R&D landscape more attractive to top international talent. The Station F campus in Paris was created to enable AI startups to receive advice on legal issues and the impact of new AI technologies in France from thirty nearby public institutions. France’s push on AI is already showing signs of paying off. According to one report, France had raised $1.2 billion in investment for AI startups by the end of 2019,81 making it Europe’s frontrunner in AI funding ahead of the UK.82

The French strategy emphasizes ethical considerations related to AI (such as the implications of self-driving cars, facial/image recognition, and privacy) as well as inclusivity and diversity (such as a goal of reaching a 40 percent share of female students in “digital subject areas” by 2020), areas that are deemed important for sustainable growth and future societal resilience.83 The strategy is detailed and outlines concrete steps for making the country more attractive to research, talent, and industry actors; for improving transparency and AI cooperation between different actors; and for integrating moral and ethical issues. It is one of the more ambitious European AI strategies: it includes more details than Germany’s, a timeline (unlike the UK’s strategy), and specific policies to achieve its goals.

The French AI strategy stands out for its government-led, top-down approach, which illustrates how strategically important the government considers AI to be. Yet it is unclear whether this approach will create a sustainable ecosystem in which private sector–driven development and public-sector uptake can flourish. France’s focus on creating a European data ecosystem and Macron’s advocacy for a stronger EU could be positive for the continent’s AI landscape. An additional motivation for Macron is to position France as a destination for technology companies after Brexit. Companies like Google, Facebook, Uber, IBM, Samsung, and Microsoft have already opened or announced the creation of AI research centers in Paris.84

The UK

The UK’s AI strategy saw several major developments in 2018. These included the creation of new institutional structures such as the Office for AI and the Centre for Data Ethics and Innovation; the release of a new AI Sector Deal policy paper to spearhead cooperation between various governmental agencies and institutions, private companies, and academic centers; a large package of investment; and the publication of a report by the House of Lords’ Select Committee on AI setting out the country’s ambition to lead the agenda on ethical AI.85 In April 2018, the government announced an investment of nearly 1 billion pounds for its “AI Sector Deal,” made up of 603 million pounds in new government, industry, and academic spending and up to 342 million pounds in previously announced state funding.86 However, the document has been criticized for not setting a clear timeline for these investments.87

The “AI Sector Deal” paper focuses on the five foundations of the UK’s industrial strategy: ideas, people, infrastructure, the business environment, and places. It also sets out a vision for responding to the challenges and opportunities presented by AI based on: making the country a global center for AI by investing in R&D, skills, and regulatory innovation; supporting sectors to boost productivity through AI and data analytics; leading the world in the safe and ethical use of data and strengthening digital capabilities by establishing a Centre for Data Ethics and Innovation; and helping people develop the skills needed for the jobs of the future.88 The policy paper was a response to a report on the UK’s AI industry and how the country could remain at the forefront of AI development and use and retain its world-leading status.89

These governmental initiatives aim to build a more coherent national narrative on AI and to consolidate existing institutional initiatives, which have been thinly dispersed and uncoordinated across technological domains such as AI, autonomous systems, and robotics, so as to meet the UK’s stated ambition to become a world leader in these sectors.90 The country’s AI research is influential globally, and London has the highest concentration of AI startups in Europe as well as a strong ability to attract international investment in startups; however, the commercialization of research has traditionally been a weakness for the UK.91 It remains to be seen whether the “AI Sector Deal” will help fix this shortcoming by spurring cooperation between the private sector and academia. While the UK strategy does a good job of identifying national strengths and outlining areas where targeted investments are needed to address weaknesses, the absence of a clear timeline for any of these investments is notable.

Additionally, with the possibility looming that the country may not reach a post-Brexit relationship agreement with the EU, it is unclear how the UK will continue to attract talent and investment from Europe, and the “AI Sector Deal” does not address this eventuality. At the same time, the UK has considerable strengths: it accounts for nearly one-fifth of AI researchers in the EU, it trails only the Netherlands in the quality of AI research papers produced, it has a tradition of leading the EU in collaborating with third countries, and nearly 40 percent of European AI firms that have received at least $1 million in funding are based in the country.92 Nevertheless, with France eager to create a European data ecosystem and with Brexit potentially causing international technology companies to move from London to the rest of Europe, the UK may lose out not only on EU research funding but also on access to the European data pool. Similarly, collaboration between UK universities and their European counterparts could suffer as they lose out on lucrative EU-funded partnerships and grants. While the “AI Sector Deal” is well thought-out and has broad support from industry, academia, and the government, it is unclear whether it will be able to prevent or mitigate the potentially damaging ripple effects Brexit may have on the country’s AI landscape.

Germany

Significantly shorter than its French equivalent, Germany’s AI strategy sets out twelve areas to address by 2025.93 These include making Germany and Europe a leader in AI research to excel in future innovation, setting up innovation competitions and European innovation clusters, improving AI-related technology transfers to the economy and to small and medium-sized enterprises (the Mittelstand), establishing incentives for investors and founders of AI startups, promoting digital skills and AI-related education, and improving talent attraction and retention. From 2019 to 2025, the country aims to spend 500 million euros annually in support of these goals, which is more money over a longer timeframe than France’s AI funding.94

The strategy emphasizes having government administrators employ AI to offer better and more efficient services for citizens; making data available and facilitating its use; adjusting the country’s legislative framework to include algorithms and AI-based decisions, services, and products; establishing AI standards and norms on national, European, and international levels; ensuring national and international cooperation on AI-related developments; and establishing a broad public dialogue and encouraging political participation to incorporate AI into society in ways that the public deems ethically, legally, and institutionally correct. In addition, in October 2019, the Data Ethics Commission released guidelines for the development and use of AI, guidelines that became a model for the EU’s white paper.95

Germany seems committed to pursuing the responsible development and use of AI. Its strategy builds on the strengths of the country’s economy and aims to expand them by improving the transfer of innovations into industry. The strategy has a wide scope and allocates more funding to AI than other European strategies, but it does not go into much detail on the specific allocation of funds to meet its twelve stated priorities. Its focus on research volume and technology transfers ignores the fact that Germany is late to the AI game and does not have innovation hot spots like Cambridge or Zurich.96 The German Research Center for Artificial Intelligence, however, is considered the world’s largest nonprofit contract research institute for software technology based on AI.97 Additionally, most German universities are not allowed to pay salaries comparable to what top researchers might receive in the United States or China, which makes attracting talent even more difficult, an issue the strategy largely ignores.98

Sweden

Sweden’s “National Approach for Artificial Intelligence,” released in May 2018, outlines what the country needs to be at the forefront of AI development and use.99 While the document does not include specific policies for achieving its stated objectives, the government’s goals include developing standards and principles for safe, sustainable, and ethical AI; improving the digital infrastructure to leverage existing opportunities; increasing access to data; and playing an active role in EU efforts. The strategy stresses the country’s lack of skilled AI professionals and the need to increase basic and applied AI research within a legal framework that ensures sustainable (defined as ethical, reliable, safe, and transparent) AI development.

The government has since implemented several targeted initiatives to achieve its goals and has made good progress. Nonetheless, the strategy could benefit from the formulation of specific policies and the commitment of AI-designated funds to strategic initiatives. To further guide the country’s efforts and to promote a Swedish model for AI, a national AI council—similar to the one in Finland—has been established to bring together private-sector representatives, academics, and other experts.100

Since May 2018, the government has invested around 3.7 million euros in several universities to help train AI professionals.101 It also launched an AI Data Factory and Arena at the Lindholmen Science Park in Gothenburg to enable collaboration and strengthen Swedish companies.102 This initiative has since developed into a national center for AI called AI Innovation of Sweden, which brings together some fifty partners.103 The Swedish innovation agency, Vinnova, has also launched several AI-related projects, such as e-healthcare systems for home care, the use of AI in breast cancer screenings, and AI-controlled vehicles in mining operations.104

Another player is the government-funded research institute RISE, which has some sixty employees working on AI-related projects.105 Besides government funding for AI, Sweden also benefits from strong private-sector investment and a strong innovation climate with successful incubators and access to venture capital. In particular, the Wallenberg AI, Autonomous Systems and Software Program (WASP) plans to channel 520 million euros toward AI research in Sweden by 2029.106 WASP includes some forty Swedish companies and academic institutions, and it focuses on machine learning, deep learning, and explainable AI.107 Another notable commercial effort is Zenuity, a joint venture led by Volvo Cars that is providing some 95 million euros for research into self-driving cars.108

Finland

Finland released “Finland’s Age of Artificial Intelligence” AI strategy in December 2017.109 It takes a more bottom-up approach to AI than most other European countries and was produced by a working group on artificial intelligence. Some major intellectual and policy outputs have been the August 2018 report “Work in the Age of Artificial Intelligence—Four Perspectives on the Economy, Employment, Skills and Ethics” produced by a working group on the transformation of society and work and the June 2019 final report of Finland’s Artificial Intelligence Program called “Leading the Way Into the Era of Artificial Intelligence.”110 To assist in drafting an AI development strategy and to provide advice to the government, the Ministry of Economic Affairs and Employment established a national steering group headed by former president of Nokia Pekka Ala-Pietilä, who now chairs the EU High-Level Expert Group on AI (AI HLEG).111

While the strategies of other countries focus primarily on either talent or upskilling with regard to AI, Finland’s stands out by emphasizing the need to “train, retain and attract AI talent through stronger investment and enhanced visibility of Finnish AI expertise” as AI transforms society.112 The Finnish approach to staying globally competitive in AI is to train the population and educate it about AI’s potential impacts on society. According to Minister of Economic Affairs Mika Lintilä, the government acknowledges that Finland does not have the resource advantage that bigger countries have, so the goal is to become a leader in “practical applications of AI.”113 A good example of this approach to educating citizens is “Elements of AI,” a free, first-of-its-kind online course designed to raise AI literacy and open to everyone.114 Recent efforts to educate the population also include the 1 percent scheme, which aims to teach 1 percent of the population (about 55,000 people) the basics of AI, with the number of people trained to increase gradually over the years.115

One aspect of Finland’s strategy that has been praised is the inclusion of a SWOT (strengths, weaknesses, opportunities, and threats) analysis. In its guidelines for creating an AI national strategy, the World Economic Forum highlights that the process should begin with such an assessment as it will help keep the strategic goals in line with what a given “country requires in terms of demographic needs, strategic priorities, urgent concerns, the aspirations of its citizens, its resource constraints and geopolitical consequences.”116

Further steps the government intends to take include creating a plan for bringing small and medium-sized enterprises (SMEs) on board, as well as partnering with Estonia and Sweden to form Europe’s top laboratory for AI test trials.117 Finland’s goals in AI are part of a broader strategic vision for the country. As Ilona Lundström, a director general at the Ministry of Economic Affairs and Employment and one of the masterminds behind the AI strategy, states: “We are using AI as the flagship project for a bigger kind of setup of themes of digitalization.”118 Recently, Finland announced plans to offer its online course on AI to all EU citizens free of charge.119

Estonia

As a pioneer in e-governance and one of the most digitally advanced countries in the world, Estonia has a strong technological foundation. The country also has an impressive history of producing unicorn startups (privately owned companies valued at over $1 billion each), including Skype, Playtech, TransferWise, and Bolt, though at least one of these firms has since been acquired by a publicly traded corporation.120 The government is now seeking to build on its tech-savvy society with the help of AI. Estonian experts assessed how the private and public sectors could engage more with AI in the 2019 Kratt report.121 (Experts decided to refer to AI as Kratt, an Estonian mythical creature that is “devoted to serving its master but can become bad if left idle.”)122 The report’s proposals informed a short-term (2019–2021) AI strategy. The government plans to invest at least 10 million euros to implement the strategy and aims to produce a long-term plan in the near future.123 Estonia strongly emphasizes public-sector adoption, increased investment, R&D, and the promotion of ethical and trustworthy AI. Its short-term approach might be beneficial as a test case for deriving best practices and lessons to inform a more long-term strategic approach.

The strategy also places more emphasis on AI in the public sector, where Estonia strives to gain a competitive edge because it believes this aspect of AI receives the least attention from the rest of the world.124 The government’s goal in applying AI solutions in the public sector is to “increase the user-centeredness of services, improve the process of data analysis, and make the country work more efficiently.”125 The country has also already been actively implementing AI across society. In late 2019, it introduced its first AI-based application component, a text-analysis tool126 embedded in the government’s public code repository, which makes software solutions built for the public more effective and accessible. Additionally, according to the government, as of October 2019 at least twenty-three AI solutions had been deployed in the public sector, with the goal of having at least fifty AI use cases by the end of 2020.127 One of these solutions uses predictive analytics to help decide where to send police officers to direct traffic. The most ambitious project planned is an “AI Judge” that will help decide some small-claims cases in court.128

Czechia

Czechia released its national strategy for AI in May 2019.129 Largely in line with the European Commission’s “Coordinated Plan on Artificial Intelligence,” the Czechs’ national approach seeks opportunities for deeper engagement with EU-level initiatives and aims to make the country an innovation leader in the field. The strategy splits its objectives into three parts: short term (by 2021), medium term (by 2027), and long term (by 2035).

Similar to the EU-level and national strategies of other member states listed above, Czechia aims to develop responsible and trusted AI in accordance with EU guidelines, invest in R&D, support startups, and identify opportunities for economic growth while increasing employment and upskilling workers. Recently, the Czech Institute of Informatics, Robotics and Cybernetics has been given the opportunity to establish a European Center of Excellence for Industrial Robotics and Artificial Intelligence, with almost 50 million euros in startup support from the Research and Innovation Center on Advanced Industrial Production.130 Czechia showcases the EU’s potential to set a certain strategic vision for member states for developing trustworthy AI, implementing EU-wide guidelines, and creating added value when it comes to a common European approach.

Other European National Efforts

Other European countries that have recently published AI strategies include Austria, Belgium, Denmark, Italy, Lithuania, Luxembourg, Malta, Norway, Poland, Portugal, and Spain.131 Meanwhile, several other countries are working on one. In addition, Austria, Ireland, and Italy have established national AI task forces. Portugal, Romania, and Spain have included AI in their national digitization strategies. Most of these initiatives emphasize, among other things, strengthening national research as the basis of AI; setting up AI centers; committing to supporting industry and SMEs; and improving data sharing between the public, industry actors, and the public sector.132

National AI Strategies: More Than the Sum of Their Parts?

EU member states’ individual national strategies sometimes contain references to the EU’s collective AI plan, and some have in turn influenced the EU strategy. While Europe’s diverse AI strategies all tend to focus on labor-market impacts, many of them also stress ethical challenges in the commercialization, scaling, and research of AI. The fact that EU member states have very different levels of readiness (some member states do not yet have AI strategies, while others have fully developed AI plans) further demonstrates the fragmentation of the European digital market, with Northern European countries generally leading the way and Southern and Eastern European ones tending to lag behind (with some notable exceptions).133

While many of the European national strategies recognize AI as an engine of economic growth and an accelerator of digital change, they rarely share a common definition of what constitutes AI. This matters from a regulatory point of view, as AI risks becoming a definitional moving target, especially in view of a future EU regulatory framework and the design of any new legal instruments. Moreover, any common definition would need to be flexible enough to capture the evolving nature of the technology while providing enough legal clarity for enforcement.

The emphasis on ethical and sustainability issues in the national strategies also reflects a shared understanding of the risks (societal, economic, or security) that AI entails. The broad goals set out in many of these strategies seek to impact different levels of society and the economy at once, which increases both the complexity of the policies required to achieve these goals and the difficulty of measuring success. Because of their fuzzy definitions of AI, some European strategies also lack measurable goals and benchmarks (although these are inherently difficult to craft).

The European strategies differ most from non-European ones in their heavy focus on ethical, trustworthy, and sustainable development and use of AI systems and services. This normative framing prioritizing a human-centric approach to AI—if further clarified, deepened, and supported by legal certainty—could provide a much-needed competitive advantage for European AI products and services by inspiring more consumer confidence and by providing a roadmap for the regulation of such products. At the same time, the goal should be to avoid hollow rhetorical declarations or overregulation that could impede innovation, commercialization, and uptake. In particular, uncertainty and vague declarations risk scaring away startups and deterring venture capitalists from investing in Europe.

In addition to strictly national efforts, there are also positive examples of cooperative agreements on AI between individual member states—such as the ones between France and Germany and between France and Finland. These agreements are important examples of member states working bilaterally to strengthen the EU’s cross-border cooperation in AI. New cooperation platforms with relevance for AI innovation between European countries are also emerging. One prominent example is the Franco-German Joint European Disruptive Initiative (JEDI) launched in 2018. Modeled after the U.S. Defense Advanced Research Projects Agency (DARPA), this body will identify and support technological challenges likely to disrupt existing industries. For 2018, JEDI was allocated an initial budget of 235 million euros with the goal of increasing funding to 1 billion euros a year once the initiative is fully operational.134

Assessing the EU’s Approach To AI

In addition to national-level efforts, the EU has developed policies and funding opportunities to help advance Europe’s role on AI in recent years.

EU Funding for AI Research and Development

One major role the EU plays is through its funding mechanisms. As part of the EU budget now being negotiated, the multiannual financial framework for 2021–2027, the European Commission has proposed a Digital Europe Program as part of the Single Market, Innovation and Digital chapter. This puts forward 9.2 billion euros for investments in high-performance computing and data, AI, cybersecurity, and advanced digital skills.135 By building on the Digital Single Market Strategy that the European Commission launched in 2015, the goal is to prepare citizens for the digital age and to boost the digitalization of Europe. Since 2004, AI has been included in EU funding for R&D, with a heavy focus on robotics. In the 2014–2020 period, investments in robotics increased by 700 million euros.136 This came on top of 2.1 billion euros in private investment in the EU research program called the Partnership for Robotics in Europe, or SPARC euRobotics.137 It is still unclear how the economic fallout from the coronavirus pandemic will affect the size and priorities of the long-term EU budget.

Under the current EU budget (2014–2020), the European Structural and Investment Funds provide 27 billion euros for skills development, of which the European Social Fund invests 2.3 billion euros in digital skills.138 The AI4EU project—a grant agreement between the European Commission and seventy-nine private-sector and academic institutions in twenty-one member states—was launched in January 2019.139 It aims to mobilize and promote the European AI ecosystem and provide access to essential AI resources for all users in the EU. The 20-million-euro project will run for three years and will try to establish a network of AI knowledge, tools, and research across the EU. The European Investment Fund has promised to make available 100 million euros in 2020 to support promising AI startups.140 Jean-Eric Paquet, the European Commission’s director general for research and innovation, has said that the fund will seek to close the investment gap the EU faces “by providing equity and grant funding to early stage firms in so-called deep tech, such as manufacturing, biotechnology, health-tech and artificial intelligence.”141 This allocation may be followed by a 3.5 billion euro investment fund in 2021, which will invest in early-stage technology in an effort to increase innovation.142

Between 2014 and 2017, the EU invested around 1.1 billion euros in AI-related research under the multi-annual R&D funding program Horizon 2020.143 Under Horizon Europe, Horizon 2020’s successor, the European Commission proposes to invest 100 billion euros for research and innovation to strengthen the EU’s scientific and technological bases; boost its innovation capacity, competitiveness, and jobs; and deliver on citizens’ priorities and sustain Europe’s socioeconomic model and values.144 The strategic planning process will focus in particular on the pillar addressing global challenges and European industrial competitiveness, which includes a digital and industry cluster with key digital technologies, AI and robotics, and advanced computing and big data as key areas of intervention.

The new European Commission has made investing in AI a top priority as part of its efforts to strengthen the EU’s digital sovereignty, so that users will have more choice and control over which IT products and services they use. The February 2020 white paper on AI notes that in the last three years “EU funding for research and innovation for AI has risen to 1.5 billion [euros], i.e. a 70% increase compared to the previous period,” but it equally points out that “investment in research and innovation in Europe is still a fraction of the public and private investment in other regions of the world.” More precisely, it notes that “3.2 billion [euros] were invested in AI in Europe in 2016, compared to around 12.1 billion [euros] in North America and 6.5 billion [euros] in Asia.”145

To bridge this gap, the European Commission plans to revise the December 2018 “Coordinated Plan on Artificial Intelligence” by the end of 2020 as a follow-up to the public consultation on the white paper. To help fulfill the white paper’s recommendation to lay the groundwork for increased coordination with EU member states, it plans to attract over 20 billion euros in annual investment in AI in the EU over the next decade.146 As far as SMEs and startups are concerned, the European Commission and the European Investment Fund will launch a “pilot scheme of 100 million [euros] in Q1 2020 to provide equity financing for innovative developments in AI.”147 The economic fallout from the coronavirus pandemic raises questions about how much money the next multiannual financial framework can allocate toward AI R&D, given potential budget cuts and competing priorities. The European Commission has recently identified AI as one of the core areas where the EU needs to invest more as part of the post-pandemic economic recovery.148

The European Commission’s Evolving Approach

Besides providing funding for R&D, the European Commission has sought to shape a common approach to AI in other ways (see table 2).

Table 2: Timeline for the EU’s AI Strategy

| Date | Development |
| --- | --- |
| April 10, 2018 | Member states sign a “Declaration of Cooperation on Artificial Intelligence.” They agree to work together on the most important issues raised by AI, from ensuring competitiveness in R&D to dealing with the resulting social, economic, ethical, and legal questions. |
| April 25, 2018 | The European Commission adopts the “Communication on Artificial Intelligence.” This document lays out the EU’s approach to AI. It is characterized by its unique emphasis on ethical AI and aims to increase the EU’s technological and industrial capacity as well as AI uptake by the public and private sectors, to prepare Europeans for the socioeconomic changes brought about by AI, and to ensure that an appropriate ethical and legal framework is in place. |
| June 14, 2018 | The European Commission appoints the AI HLEG. Consisting of fifty-two experts on AI from academia, civil society, and business, the group advises the European Commission on the implementation of its AI strategy. |
| December 7, 2018 | The European Commission presents a “Coordinated Plan on AI.” Prepared with member states to foster the development and use of AI in Europe, this document notes that the EU is lagging behind in private investments and “risks losing out on the opportunities offered by AI” without significant efforts. The plan focuses on the need for strengthened cooperation among all involved parties in key areas: encouraging more investment and financing for startups and innovative SMEs; increasing excellence in trustworthy AI technologies and the broad diffusion of such technologies; adapting learning and training programs and systems to better prepare society for AI; building a European data space for AI; developing ethical guidelines while also ensuring an innovation-friendly legal framework; and tackling security-related aspects of AI applications.149 |
| January 9, 2019 | The AI4EU project launches. The project brings together seventy-nine top research institutes, SMEs, and large enterprises in twenty-one countries to build a focal point for AI resources, including data repositories, computing power, tools, and algorithms.150 It aims to offer services and support to potential users of the technology and to help them test and integrate AI solutions in their processes, products, and services. |
| April 8, 2019 | The HLEG publishes the “Ethics Guidelines for Trustworthy AI.” The guidelines put forward a human-centric approach to AI and list seven key requirements that AI systems should meet in order to be trustworthy.151 |
| April 8, 2019 | The European Commission issues a “Communication on Building Trust in Human Centric AI.” This communication sets out the seven requirements that all AI applications should comply with to be considered trustworthy: human agency and oversight; technical robustness and safety; privacy and data governance; transparency; diversity, nondiscrimination, and fairness; societal and environmental well-being; and accountability.152 The principles identified in the communication come from the HLEG, whose primary task was to draft AI ethics guidelines based on the existing regulatory framework; these guidelines should be applied by all developers, suppliers, and users of AI. |
| June 26, 2019 | The HLEG publishes “Policy and Investment Recommendations for Trustworthy Artificial Intelligence.” This document puts forward thirty-three recommendations that can guide trustworthy AI toward sustainability, growth and competitiveness, as well as inclusion, while empowering, benefiting, and protecting human beings. The recommendations aim to help the European Commission and member states update the coordinated plan by the end of 2019.153 |
| June 26, 2019 | The HLEG launches the pilot phase of the “Assessment List of the Ethics Guidelines for Trustworthy AI,” which is to last until December 1, 2019. |
| February 19, 2020 | The European Commission releases the “White Paper on Artificial Intelligence—A European Approach to Excellence and Trust,” a “European Strategy for Data,” and “A Strategy for Europe Fit for the Digital Age.” To achieve an ecosystem of excellence, the European Commission proposes to streamline research, foster collaboration between member states, and increase investment in AI development and deployment. These actions build on the coordinated plan on AI from December 2018. To achieve an ecosystem of trust, the European Commission presents options for creating a legal framework that addresses the risks to fundamental rights and safety. This builds on the Ethics Guidelines for Trustworthy AI, which were tested by companies in late 2019. A legal framework should be principles-based and focus on high-risk AI systems to avoid unnecessary obstacles to innovating companies. The European Commission conducted a public consultation on the white paper until May 31, 2020, and it plans to present proposals for a regulatory framework in December 2020. |

An early effort came in April 2018 when twenty-four member states and Norway agreed on a “declaration of cooperation on artificial intelligence.”154 Since then, the remaining member states and the UK have also signed on to this statement.155 The nonbinding declaration called for “a comprehensive and integrated European approach on AI” and included language on cooperation to boost the EU’s technological and industrial capacity by improving access to public-sector data, addressing socioeconomic challenges, and ensuring an adequate legal and ethical framework.

The declaration mentions the new threats AI poses before discussing the opportunities. This is in sharp contrast to other countries’ emphasis on opportunities, though it is hardly surprising given that the purpose of the EU document is to justify future regulation. In 2018, Mariya Gabriel, then commissioner for digital economy and society, said the “enhanced cooperation efforts will focus on reinforcing European AI research centers, creating synergies in R&D&I [research and development and innovation] funding schemes across Europe, and exchanging views on the impact of AI on society and the economy.”156 The signatory countries have engaged in a continuous dialogue with the European Commission, which acts as a facilitator, to promote a new framework for collaboration between countries. The declaration illustrates the commitment of member states to advancing the EU’s role in AI and enhancing coordination among themselves.

Later in April 2018, the European Commission put forward more concrete ideas about a European approach in its “Communication on AI.”157 This was based on three pillars that then formed the backbone of the subsequent coordinated action plan on AI.158 The first pillar is keeping ahead of technological developments and encouraging the uptake of AI by the public and private sectors. The second pillar is addressing the socioeconomic changes brought about by AI through, for instance, efforts to attract AI talent, support digital skills and STEM education, and encourage member states to modernize their education and training systems. The third pillar is ensuring an appropriate ethical and legal framework and legal clarity for AI by developing ethics guidelines and guidance on the interpretation of the EU Product Liability Directive with regard to AI.

Building on the “Communication on AI,” the European Commission released a “Coordinated Action Plan” in December 2018.159 This nonbinding document emphasizes that stronger coordination is essential for Europe “to become the world-leading region for developing and deploying cutting-edge, ethical and secure AI.” It proposes joint actions for closer and more efficient cooperation between the member states, Norway, Switzerland, and the European Commission in four key areas.160 The first area is maximizing investment through increased coordination and partnerships. Joint actions to achieve this goal include all member states having AI strategies in place by mid-2019, a new EU-AI public-private partnership, a new AI scale-up fund for startups and innovators, and the development of world-leading AI centers (through digital innovation hubs and the European Innovation Council pilot initiative).161 The second area is creating European data spaces to make more data available and help share this data seamlessly across borders while complying with the GDPR. As part of this effort, a support center for data sharing went live in July 2019. The third area is fostering talent, skills, and lifelong learning. Measures include supporting advanced AI-related degrees through dedicated scholarships, supporting digital skills and learning, including AI in education programs to foster human-centered AI development, and attracting talent through the Blue Card system. The fourth area is developing ethical AI and ensuring trust. The aim here is to develop AI technology that respects fundamental rights and ethical rules, with the ambition of bringing this ethics-driven approach to the global stage.

At the time, Andrus Ansip, then vice president for the digital single market, noted that the EU would work to pool data and coordinate investments with the aim of reaching at least 20 billion euros in private and public investments by the end of 2020.162 Mariya Gabriel, then commissioner for digital economy and society, added that the coordinated action plan would help Europe compete better globally “while safeguarding trust and respecting ethical values.”163 Its full implementation will be a key task for the current European Commission, which will serve until 2024, and will be contingent on funding in the next multiannual financial framework.

The High-Level Expert Group on AI

To support the articulation of a European strategy, the European Commission established the AI HLEG in June 2018; the group consists of fifty-two figures from academia, the private sector, and civil society.164 The group launched a consultation process in December 2018, and in April 2019 it published “Ethics Guidelines for Trustworthy Artificial Intelligence,” outlining the EU’s approach to setting ethical guidelines and increasing investment in AI.165 The guidelines define “trustworthy AI” as having three principal components: lawfulness, ethical adherence, and robustness.166 The guidelines include many of the common themes in member states’ strategies, such as transparency, safety, and fairness and nondiscrimination, but they also cover some issues less often associated with AI, such as the environment.

Additionally, the guidelines list seven key requirements based on fundamental rights and ethical principles that AI systems should meet to be considered trustworthy. These are: human agency and oversight; technical robustness and safety; privacy and data governance; transparency; diversity, nondiscrimination, and fairness; societal and environmental well-being; and accountability. The HLEG states that this “trustworthy approach” is key to enabling what it calls “responsible competitiveness” by providing a foundation of trust for those who will be affected by AI systems. While the guidelines were a good first step toward what the EU wants to accomplish, the fact that they are nonbinding may limit their usefulness, since self-regulation seems unlikely to work (as experience with privacy issues has shown).167 Companies and government entities using AI technology should be accountable to laws, not merely to self-regulation or industry codes of ethics, so that consumers can understand and potentially challenge decisions made using AI.

In June 2019, the HLEG published a document called “Policy and Investment Recommendations for Trustworthy Artificial Intelligence.”168 This document calls for significant new investment and resources dedicated to transforming the regulatory and investment environment for trustworthy AI in Europe. It consists of thirty-three recommendations to guide trustworthy AI toward “sustainability, growth and competitiveness, as well as inclusion—while empowering, benefiting and protecting human beings.” These recommendations are directed at a wide range of actors, including governments, SMEs, and academia.