

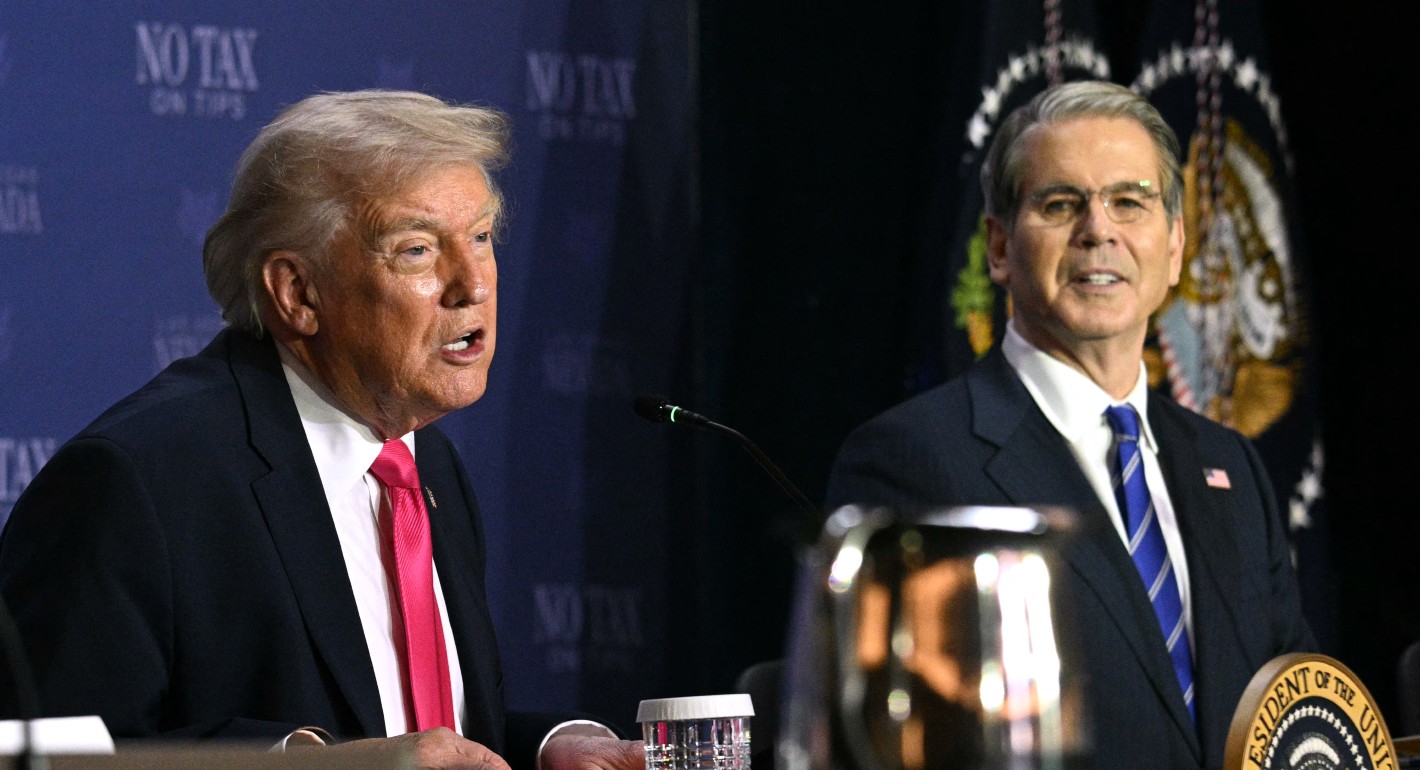

Source: Getty

Biden’s AI Order Is Much-Needed Assurance for the EU

It shows that Washington is an active partner in regulating advanced AI systems.

On Monday, President Joe Biden’s administration released a first-of-its-kind executive order (EO) tackling the risks from advanced AI systems. The order requires those developing such systems to share information with the government and initiates the development of standards.

The EO is not just important domestically—it is also a signal to the international community that the United States intends to take action on AI governance. This signal is especially loud for the EU, which is in the final stages of negotiating its sweeping AI legislation, the AI Act. The regulation of advanced AI systems is one of the final controversies holding up the proposal, with some member states concerned that unilaterally imposing regulations in the EU would curtail the growth of the region’s own industry and favor U.S. companies. Confidence in the United States as a partner in regulation could help assuage those concerns, and details from the EO are a useful reference point, simplifying the EU’s negotiations and setting the scene for international cooperation on governance.

Since the first draft of the AI Act was introduced in April 2021, the EU has sought to lead the global conversation on AI governance. It was well placed to do so, regulating one of the world’s three largest markets and proposing legislation that would be the broadest regulation yet of this technology. When ChatGPT was released in November 2022 and brought the impressive capabilities of large, general models into the public eye, the EU responded by including these advanced AI systems in the scope of the act. Dubbed “foundation models,” a category intended to cover OpenAI’s GPT-4 and Anthropic’s Claude, these systems would have a unique set of requirements to adhere to.

However, where the EU was generally happy to move ahead of the world on AI legislation for other issues, regulation of foundation models proved to be more controversial. Hoping to cultivate their own powerful AI systems with startups such as Mistral AI (from France) and Aleph Alpha (from Germany), some member states worry about the legislation’s impact on their nascent industries. Calls for stronger requirements were seen as being led by dominant U.S. AI companies that hoped to entrench their lead by building a regulatory moat. Skepticism that the United States would take any meaningful legislative or regulatory action against its own AI industry has further diluted motivation to act for some in the EU. Nevertheless, many in the EU still want to impose requirements, and negotiators are now struggling to agree on the intensity of the requirements for foundation models.

At the same time, the EU is under pressure to finalize the act soon. Without a final position, EU member states are left in limbo when operating on the international scene, where efforts are proliferating. The UK’s AI Safety Summit will start on Wednesday, the G7 has released a code of conduct for those developing advanced AI systems, and the UN has announced a high-level advisory body on AI to explore different courses of action. In addition, if the act is not finalized before the end of the year, it could be pushed back to late 2024 as the European Parliament’s elections take center stage.

Against this backdrop, the EO helps the EU in two ways. First, the order demonstrates to the EU that it will not be alone in imposing restrictions on advanced AI systems. The Biden administration’s voluntary commitments signed by leading AI labs were cold comfort to Europeans. By contrast, the EO represents a move toward enforceable requirements from the U.S. government and shows that Washington is willing to restrict U.S. AI companies. It is still not perfect—the EO must work within the U.S. government’s existing authorities and is limited in the kinds of restrictions it can impose—but it’s an important step. New legislation from Congress remains crucial for the future.

Second, the details of the executive order will be useful. Like others, the EU is still grappling with this new and fast-moving technology and struggling to define which advanced AI systems should be regulated and how. The definitions and categories established in the EO, including a technical definition for “potential dual-use” AI, could be a helpful reference, and the list of information the EO requires from AI companies under the Defense Production Act could also be mirrored by the EU’s legislation. More generally, the EO remains flexible in its definitions and sets up several processes to refine them and establish precise requirements in different sectors. The EU could act similarly by setting up the legislative structure for requirements while leaving the details to be filled out in the future via “delegated” or “implementing” acts, EU legislative tools designed to do exactly this. It could then work with the United States and other international partners to develop effective standards.

The EO is not a blueprint for EU action, and because it is not legislation, it is not the U.S. version of the AI Act. However, the message it sends and the approaches it takes are still important. Whatever requirements the EU ultimately puts in place won’t be in perfect alignment with the EO—they’ll have a unique EU spin—but this executive order at least signals to EU member states that they’re not acting alone.

About the Author

Former Associate Fellow, Technology and International Affairs Program

Hadrien Pouget was an associate fellow in the Technology and International Affairs Program at the Carnegie Endowment for International Peace.


Carnegie does not take institutional positions on public policy issues; the views represented herein are those of the author(s) and do not necessarily reflect the views of Carnegie, its staff, or its trustees.
